Alright so you’ve probably heard about this by now. Australia — yes, the whole country — passed a law saying nobody under 16 can use social media. Not “we recommend limiting screen time” or “please be careful online, kids.” A full-on legal ban. Accounts gone. Platforms scrambling. Parents confused. Teenagers furious.

I want to talk about what’s actually happening because most of what I’ve read online either oversimplifies it or turns it into a culture war thing, and it’s neither of those.

If you don’t want to read all of this

Australia banned under-16s from social media. The law passed in late 2024 and took effect on 10 December 2025, with enforcement ramping up through January 2026. The big platforms (Facebook, Instagram, TikTok, Snapchat, YouTube) are all affected. It’s messy. That’s the summary.

How we got here

This didn’t come from nowhere. Parents in Australia (and everywhere else, let’s be honest) have spent the last five or six years watching their kids get swallowed up by these apps. And every time they looked into it, the research backed up what they were seeing at home. More time on social media, more anxiety. More depression. Worse sleep. Kids comparing themselves to filtered versions of strangers and feeling awful about it.

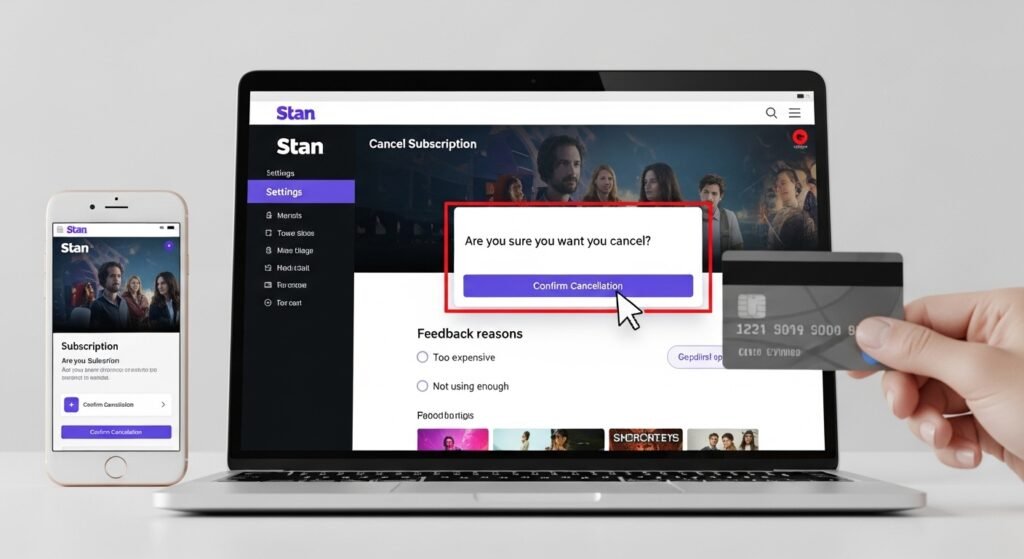

The platforms kept saying they cared. They’d put up a little checkbox — “confirm you’re 13 or older” — and act like that was meaningful. My neighbour’s kid had three Instagram accounts by the time she was 12. Three. She just typed in a fake birthday each time. Took her about four seconds.

Eventually the government got fed up waiting for Mark Zuckerberg to grow a conscience and decided to just… legislate. Was it the perfect response? No. But I get why they did it. When you’ve got a pile of studies a foot tall and the companies responsible keep shrugging, what else are you supposed to do?

The platforms and where they stand

Quick rundown because people keep asking.

Meta (that’s Facebook and Instagram) is technically complying but doing the absolute minimum they can get away with. Classic Meta. TikTok keeps announcing changes that somehow never fully materialise. Snapchat’s in this weird limbo where nobody seems sure what’s happening. And YouTube’s tweaked some age settings but it feels more cosmetic than anything.

The law’s written broadly enough that other platforms could get pulled in too. Any app with an algorithm-driven feed and a bunch of young users on it is fair game. Right now some of the smaller ones are probably just hoping nobody notices them.

And it’s not just social media platforms feeling the heat here. The ripple effects are hitting businesses too, especially local ones that relied on these platforms to reach younger demographics. If you’re a business owner trying to figure out how to stay visible online when the rules keep shifting, getting help with your Google Business Profile (what used to be called Google My Business) is honestly one of the smarter moves you can make right now. At least that channel isn’t going anywhere.

Nobody knows how to verify age online and it shows

This is the part where the whole thing starts to fall apart a little.

You can write the strictest law you want. Cool. Gold star. But how are you going to stop a 14-year-old from typing “2007” into a birthday field instead of “2012”? Because that’s still basically all most platforms ask for. A birthday. On the honour system. In 2026.
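To make that concrete, here’s a rough sketch of what an honour-system birthday check boils down to. This is my own illustration, not any platform’s actual code: the function names (`age_on`, `passes_age_gate`) and the dates are made up for the example. The point is just that the check can only ever be as honest as whatever gets typed into the box.

```python
from datetime import date

MINIMUM_AGE = 16  # the age threshold in the Australian law


def age_on(birthday: date, today: date) -> int:
    """Whole years between birthday and today."""
    years = today.year - birthday.year
    # Subtract one if this year's birthday hasn't happened yet.
    if (today.month, today.day) < (birthday.month, birthday.day):
        years -= 1
    return years


def passes_age_gate(claimed_birthday: date, today: date) -> bool:
    """Hypothetical honour-system gate: it trusts whatever the user typed."""
    return age_on(claimed_birthday, today) >= MINIMUM_AGE


today = date(2026, 3, 1)
real_birthday = date(2012, 1, 15)     # the kid is actually 14
claimed_birthday = date(2007, 1, 15)  # same kid, different year typed in

print(passes_age_gate(real_birthday, today))     # False
print(passes_age_gate(claimed_birthday, today))  # True
```

The maths is fine. The problem is the input. Nothing in a check like this can tell the second call from a real 19-year-old, which is why the argument has moved on to face scans and ID checks.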

There’s been some experimentation with those AI age estimation tools — you know, where you point your camera at your face and the software tries to guess how old you are. I tried one once and it told me I was 47. I’m 31. So there’s that.

But even if the tech worked perfectly, it raises a whole other problem. Now you’re asking children to submit biometric data — their face — to the very companies their parents don’t trust. The irony is thick enough to cut with a knife. The eSafety Commissioner (Australia’s online safety watchdog, basically) has called out multiple platforms for half-assing their verification. Which surprises absolutely no one.

Is it doing anything though

Here’s where it gets complicated.

On paper, yeah. Around 4.7 million accounts were flagged or removed by January 2026. That’s a big number. It’s worth being careful about what it means, though: that’s accounts, not kids. Plenty of kids had more than one (my neighbour’s kid had three), so the number of actual children affected is smaller than the headline figure. Still, in a country of roughly 27 million people, nearly five million underage accounts is wild.

But anyone who’s spent five minutes around a teenager knows that removing accounts doesn’t mean they’ve stopped using the platforms. Kids are borrowing parents’ phones. Lying about their age (still embarrassingly easy). Some of them have figured out VPNs, which — honestly, good for them in terms of technical literacy, terrible in terms of the law working as intended.

The government has admitted this isn’t finished. More enforcement is supposed to be coming. I’ll believe it when I see it, but at least they’re not pretending everything’s fine.

Not everyone’s a fan, and I get it

There’s a criticism of this ban that I keep coming back to. If you push kids off Instagram and TikTok, they don’t just stop being online. They go somewhere else. And “somewhere else” might be a sketchy app with no moderation, no reporting tools, and content that makes TikTok look like Sesame Street. You haven’t fixed the problem. You’ve just moved it underground.

That one keeps me up at night a bit.

There are also the kids who genuinely benefit from social media. Not everyone’s doomscrolling reels about weight loss. Some teenagers are finding art communities, connecting with other LGBTQ+ kids when their town doesn’t exactly have a Pride parade, staying in touch with friends who moved away. For a kid on a cattle station three hours from the nearest town, Instagram might be their main social lifeline. Banning it outright feels like you’re punishing them for a problem they didn’t cause.

And yes — Meta and Snapchat have made these exact arguments. But they’re saying it because they’re about to lose millions of users, not because they care about rural queer teenagers. Still, a good argument is a good argument regardless of who’s making it.

Parents and teachers can’t just sit this one out

Something that bugs me about the coverage of all this — everyone’s focused on the law and the platforms, and nobody’s talking about what’s happening at the kitchen table.

Because here’s the uncomfortable truth: you could have the most sophisticated age verification system ever invented, and it won’t matter if a parent just hands their kid an unlocked phone and goes back to watching TV. The law can create guardrails. It can’t parent your children for you.

The more promising thing, honestly, is what some schools have started doing. Digital literacy programs. Teaching kids how algorithms work — not in a boring abstract way, but in a “this is literally engineered to make you keep scrolling and here’s how” way. Teaching them to recognise when an app is manipulating them. That stuff matters.

These kids are all going to turn 16 at some point. The ban doesn’t last forever. When they finally do get access to social media, wouldn’t it be better if they actually understood what they were getting into? The ban is a stopgap. The education is the real investment. Most of the headlines are about the ban. I think the schools stuff will end up being the part that actually works.

What’s coming

If the platforms don’t get serious about verification soon, they’re going to start getting fined. Big fines. The law allows penalties of up to A$49.5 million. I don’t know if that’s a real threat or just posturing, but even the threat of it seems to have gotten some attention in Silicon Valley.

Other countries are circling. The UK’s been talking about it. France, I think. Canada. Australia’s basically the test subject right now — everyone else is waiting to see if the patient survives before they try the same treatment.

There’s also been chatter about extending the ban to newer apps. Because social media doesn’t stand still. By the time the government catches up to one platform, three new ones have popped up. It’s a whack-a-mole situation and the moles are getting faster.

I don’t think this law is going to fix everything. I don’t think anyone seriously expects it to. But it’s forced a conversation that needed to happen, and it’s made platforms sweat in a way that polite requests never did. That counts for something, even if the execution is still a mess.

Questions people keep asking me

What does the ban actually do? Stops under-16s from making accounts on major social media platforms in Australia. They’re going after the addictive feed stuff specifically — the endless scroll, the algorithmic content, the “just one more video” design.

When did it start? Passed late 2024. Took effect 10 December 2025, and enforcement got real around January 2026.

Which platforms? Facebook, Instagram, TikTok, Snapchat, YouTube. The law is vague enough that others could be added.

Who’s in charge of making it work? The eSafety Commissioner. They check whether platforms actually have real verification systems. Mostly they’ve found that platforms don’t.

Why’d they do it? Because study after study kept showing social media was messing with kids’ mental health, and the tech companies weren’t doing anything meaningful about it on their own.

Can kids still go online? Yeah, nobody banned the internet. School websites, family group chats, educational stuff — all fine. It’s the social media feed experience specifically that’s targeted.

Is it working? Millions of accounts removed, so partially. But kids are resourceful and the verification tech is still pretty weak. It’s a start, not a finish.

Will my country do this too? If you’re in the UK, Canada, or parts of Europe — maybe. A lot of governments are watching how Australia’s experiment plays out before committing to anything.