AI feels like magic until you look closely. It’s mostly math, data, and a lot of trial and error. If you’ve been asking yourself how AI works, what you’re really asking is: “How does a computer find patterns and then make decisions based on them?” This article breaks it down in plain words, with examples you have probably run into today.

What is AI?

AI is a collection of methods that let computers learn patterns from data and then use them to predict, decide, or generate something useful.

Is AI “smart” like a human?

Not quite. Most AI operates narrowly. It excels remarkably at one specific task but is totally lost when you take it away from that task.

How does it learn?

Essentially, the model is fed many training examples, makes mistakes, gets corrected, and repeats the whole process over and over.

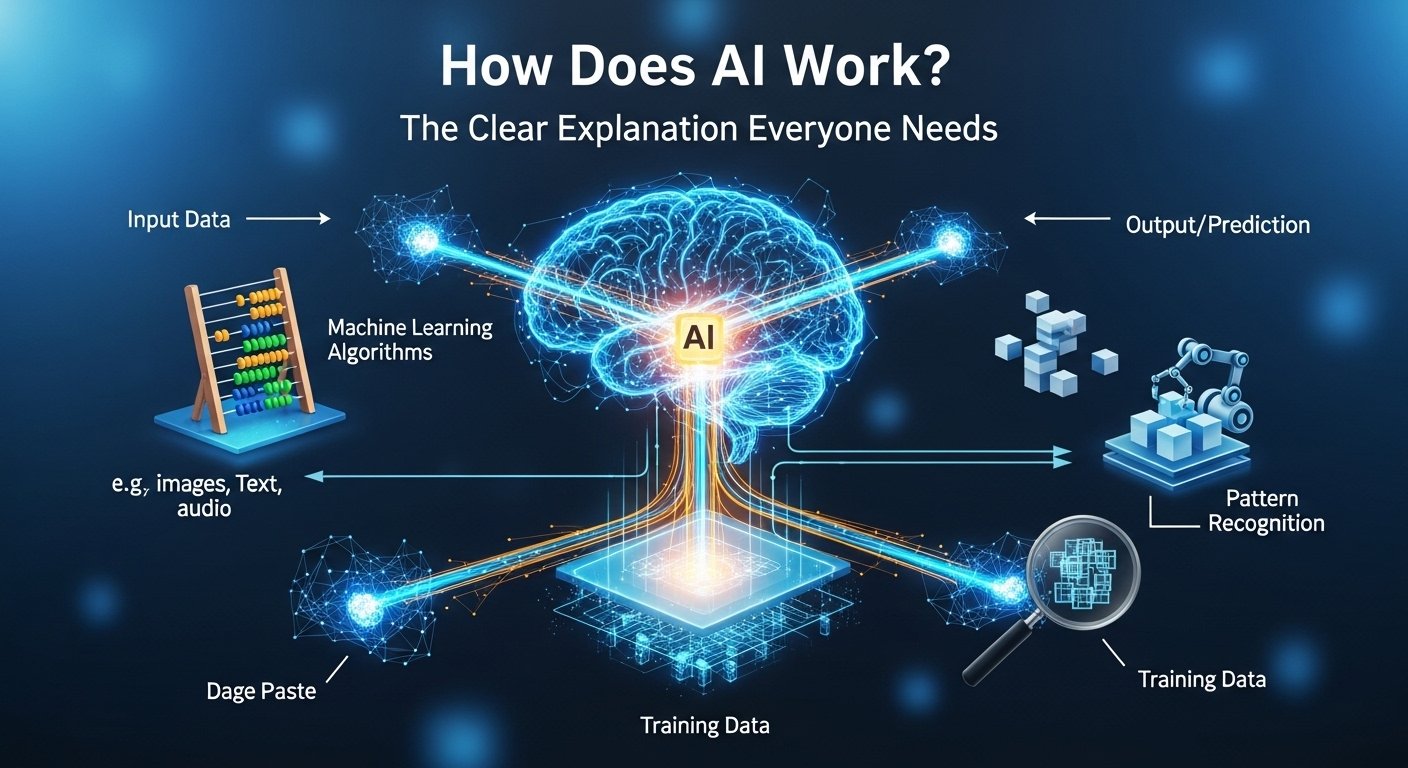

How it works

When people refer to “AI,” what they generally mean is a model trained on data. Training is the phase where learning happens: the model is shown examples, makes a guess, and is corrected whenever the guess is wrong. This back-and-forth process is at the heart of how AI and automation systems learn to perform tasks that once required human input at every step.

Later comes the “using it” phase, known as inference, when the model applies what it has learned to new input. You give it a photo, sentence, or voice note it has never seen before, and it generates an output. This is where AI-powered tools prove their real value: quietly working in the background, handling volume, and delivering results without demanding constant attention. It’s also the stage where AI-automated processes start to feel seamless, since the system is no longer learning from scratch but applying what it already knows.

At its core, the model is a math function with millions (sometimes billions) of adjustable parameters. Think of them as knobs. Training means turning these knobs, a little at a time, so that the model makes fewer mistakes.

Hence the huge emphasis on data quality. If the examples are poorly annotated or biased, the model will learn the same biases and will sometimes produce nonsense with full confidence. That’s not a bug; it’s a side effect of how statistical pattern prediction works. AI is not a truth machine but a very powerful pattern machine.
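To make the knob picture concrete, here is a minimal sketch of training a model that has exactly one knob. The data, the learning rate, and the function names are all invented for illustration; real models use the same idea (gradient descent) across a vast number of knobs at once.

```python
# Toy data (invented): the hidden rule is y = 3 * x.
# The model does not know the 3; it has to find it by adjusting its knob.
examples = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0), (4.0, 12.0)]


def mean_squared_error(knob, data):
    """Average squared gap between the model's guess (knob * x) and the truth."""
    return sum((knob * x - y) ** 2 for x, y in data) / len(data)


def train(data, steps=200, learning_rate=0.01):
    """Turn the knob a little on every step, in the direction that reduces error."""
    knob = 0.0  # start with a deliberately bad guess
    for _ in range(steps):
        # Slope of the error with respect to the knob (the gradient).
        gradient = sum(2 * (knob * x - y) * x for x, y in data) / len(data)
        knob -= learning_rate * gradient
    return knob


knob = train(examples)
print("error before training:", mean_squared_error(0.0, examples))
print("error after training: ", mean_squared_error(knob, examples))
print("learned knob value:   ", round(knob, 3))
```

The learned value ends up very close to 3 only because the examples were clean. Feed the same loop mislabeled examples and it will settle just as confidently on the wrong value, which is the data quality problem in miniature.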

How does AI work in simple terms

Think of teaching a child to identify kangaroos. You don’t formulate a rule like “if tail length is X and ears are Y.” Instead, you show many pictures: kangaroos, wallabies, dogs, people in hoodies, even random backgrounds. The child gradually picks up the features that most often go with a kangaroo: the strong legs, long tail, shape, and posture. The result won’t be perfect, but the child gets better with more examples and correction.

AI training works in a similar fashion. A model is presented with examples, attempts to label them, and then gets feedback. When it is wrong, its internal parameters are adjusted slightly. After training, the model can identify photos of kangaroos that it has never seen before. Not because it “understands” kangaroos, but because it has learned a statistical pattern that generally corresponds to what makes a kangaroo a kangaroo.

Hence, when somebody asks how AI works in simple terms, the answer is basically: show many examples → learn patterns → use patterns on new things. The concept is simple. But the scale is huge.
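The three steps can be sketched in a few lines. This is a toy, not how image models actually work: instead of photos it uses two made-up measurements per animal (tail length and height in centimetres, numbers invented), and the "pattern" it learns is simply the average measurements for each label, a technique usually called a nearest-centroid classifier.

```python
from math import dist

# Step 1: show many examples. Each one is (tail_cm, height_cm) plus a label.
# All numbers are invented for illustration.
training_examples = [
    ((90.0, 150.0), "kangaroo"),
    ((100.0, 160.0), "kangaroo"),
    ((95.0, 140.0), "kangaroo"),
    ((30.0, 60.0), "dog"),
    ((35.0, 55.0), "dog"),
    ((25.0, 65.0), "dog"),
]


def learn_patterns(examples):
    """Step 2: learn patterns. Here, the average measurements per label."""
    grouped = {}
    for features, label in examples:
        grouped.setdefault(label, []).append(features)
    return {
        label: tuple(sum(column) / len(column) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Step 3: use patterns on new things. Pick the label with the closest average."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


centroids = learn_patterns(training_examples)
print(predict(centroids, (85.0, 145.0)))  # an animal the model has never seen
print(predict(centroids, (28.0, 58.0)))
```

Notice that the model will always answer "kangaroo" or "dog", even if you hand it the measurements of a giraffe. It only knows the patterns it was shown.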

How does AI work for dummies

No offense meant. This is the simplest version, the one you could explain to a friend over coffee.

AI is like a super-fast intern who has read a million documents and seen a million examples, yet cannot always tell what matters most. The intern’s guess is based on what they have seen most often.

Training is like practice tests. The AI gives an answer, the teacher scores it, the AI improves. Repeat that millions of times and it becomes quite proficient.

However, it still makes strange errors, because the AI does not “think” as a human would. By default it has no life story, common sense, or lived experience of the world.

It is also highly sensitive to how a question is phrased. Ask the same question in two different ways and you might get two different answers, because the AI responds to patterns in the text rather than applying a single fixed rule.

So, here’s your genuine “dummies” takeaway: AI learns from examples, predicts what it thinks is the best next output, and improves via feedback. That’s all.

The rest is for the engineers to decipher.

Data: the part everyone skips, but it’s the whole game

AI is to data what a car is to gasoline. Unlike gasoline, however, data can be dirty, incomplete, or misleading. A model trained on clean, balanced data will behave vastly differently from one trained on chaotic, biased data.

Data can be structured (e.g. tables, labels, and numbers) or unstructured (e.g. text, images, audio, and video). Most of what is out there is unstructured, which is why contemporary AI depends heavily on methods that can deal with messy inputs.

Sometimes you need labels as well: “this is spam” or “this is not spam.” Learning from labeled examples is called supervised learning. You can also look for patterns without labels, which is unsupervised learning.
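Here is a small sketch of the difference between learning with labels and learning without them. The messages, the numbers, and the function names are invented, and both techniques are deliberately simplistic (word counting for the supervised part, a one-dimensional two-group k-means for the unsupervised part).

```python
from collections import Counter

# --- Supervised: every example comes with a label (invented messages). ---
labeled_messages = [
    ("win a free prize now", "spam"),
    ("free money claim your prize", "spam"),
    ("meeting moved to 3pm", "not spam"),
    ("can you review my report", "not spam"),
]


def train_word_counts(messages):
    """Count how often each word appears under each label."""
    counts = {}
    for text, label in messages:
        counts.setdefault(label, Counter()).update(text.split())
    return counts


def classify(counts, text):
    """Pick the label whose training words overlap most with the new text."""
    scores = {
        label: sum(words[w] for w in text.split())
        for label, words in counts.items()
    }
    return max(scores, key=scores.get)


# --- Unsupervised: no labels, just find two natural groups in the numbers. ---
def two_means(values, rounds=10):
    """Split numbers into two groups around two moving centres."""
    if len(set(values)) < 2:
        raise ValueError("need at least two distinct values")

    def split(low, high):
        near_low = [v for v in values if abs(v - low) <= abs(v - high)]
        near_high = [v for v in values if abs(v - low) > abs(v - high)]
        return near_low, near_high

    low, high = min(values), max(values)
    for _ in range(rounds):
        near_low, near_high = split(low, high)
        low = sum(near_low) / len(near_low)
        high = sum(near_high) / len(near_high)
    return split(low, high)


word_counts = train_word_counts(labeled_messages)
print(classify(word_counts, "claim your free prize"))
print(classify(word_counts, "review the meeting report"))

message_lengths = [2, 3, 4, 40, 42, 45]  # invented, e.g. words per message
print(two_means(message_lengths))
```

The supervised part can name its answer ("spam") because a human supplied the names. The unsupervised part can only say "these belong together"; what the groups mean is still up to you.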

Then there is reinforcement learning, where the model learns through trial and error and receives rewards or penalties. This is far more like training a dog than labeling a spreadsheet.

In Australia, you see data issues showing up in various domains: banking, insurance, hiring tools, and even customer service automation. Poor-quality data can silently contribute to unfair outcomes.

So, yes, data is boring. But it is precisely where AI becomes either useful or risky.

Models and algorithms: what “learning” actually means

Deep learning is so effective for image and language data because it can build elaborate representations automatically, without the need for hand-engineered features. The same principle powers modern AI search engines that can understand the context and intent behind queries.

But there is a downside. Training can be very costly in both money and energy. Creating large models is therefore generally the domain of big organizations with heavy computing resources.

Most companies do not start training from scratch. They fine-tune or customize existing models, or simply use them as they are with the necessary safeguards. That is often the best decision: less ego, more results.

How does generative AI work

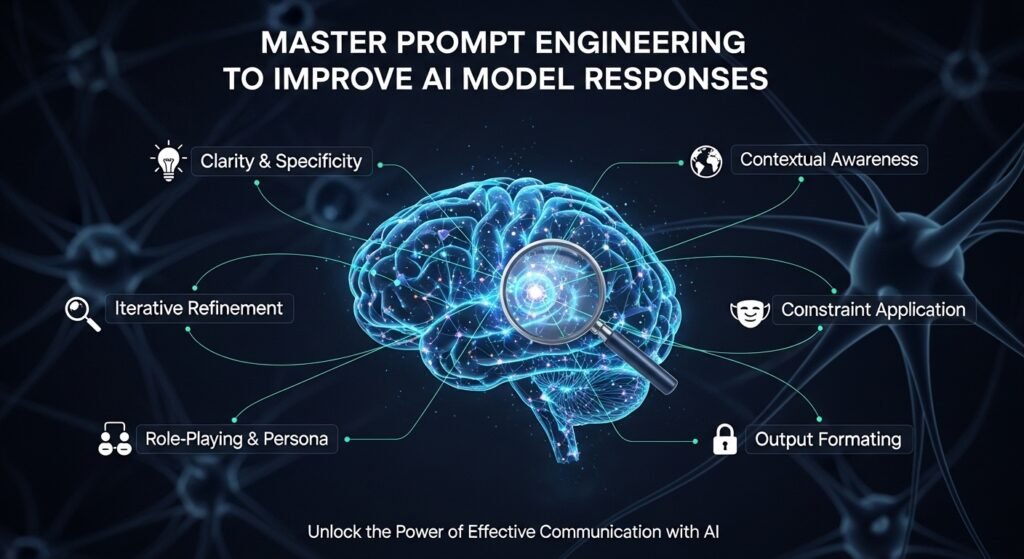

Generative AI is the subset of AI that produces content (text, pictures, sounds, computer code) rather than just classifying it. It is typically driven by deep learning models trained on very large datasets.

Language is usually generated by so-called large language models, whose job is to predict the next token (usually a short piece of text) from the tokens that came before.

In the simplest terms, the process is: look at the text so far, then predict the next word. The model does not “get a fact from a document,” because it is not a document retrieval system unless you link it to one.

Because the model has learned enough patterns to imitate them, it can draft a letter or a policy, or explain an idea, in no time.

On the other hand, it may also hallucinate: if the pattern is weak or the prompt is too ambiguous, it can fill the gaps with plausible-sounding guesses. Good systems try to mitigate this by connecting the model to trusted sources, providing citations, and enforcing strict formatting and safety filters.

Generative AI is fantastic for writing and summarizing tasks. But for serious factual matters, you have to keep it under control. If you are asking for an oracle, you are deluding yourself in a big way.
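Next-token prediction can be sketched with a model far simpler than a large language model: one that only counts which word tends to follow which (a bigram model). The tiny corpus and the function names are invented for illustration, and real models look at much more than the single previous word, but the loop of "predict, append, predict again" is the same idea.

```python
from collections import Counter, defaultdict

# A tiny invented corpus. Each word or full stop counts as one token.
corpus = "the cat sat on the mat . the cat sat on the rug . the dog ate the fish ."


def train_bigrams(text):
    """For every token, count which tokens came right after it."""
    tokens = text.split()
    following = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, token):
    """Return the most frequently seen next token, or None if the token is unknown."""
    if token not in following:
        return None
    return following[token].most_common(1)[0][0]


def generate(following, start, length):
    """Repeatedly predict the next token and append it to the text so far."""
    output = [start]
    for _ in range(length):
        nxt = predict_next(following, output[-1])
        if nxt is None:
            break
        output.append(nxt)
    return " ".join(output)


model = train_bigrams(corpus)
print(predict_next(model, "the"))
print(generate(model, "the", 6))
```

The generated text reads smoothly and means nothing. Nothing in the model checks whether a cat actually sat anywhere; it only continues with whatever was most common in its training text, which is a small-scale version of why fluent output is not the same as a verified fact.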

Benefits: What AI actually improves (when used properly)

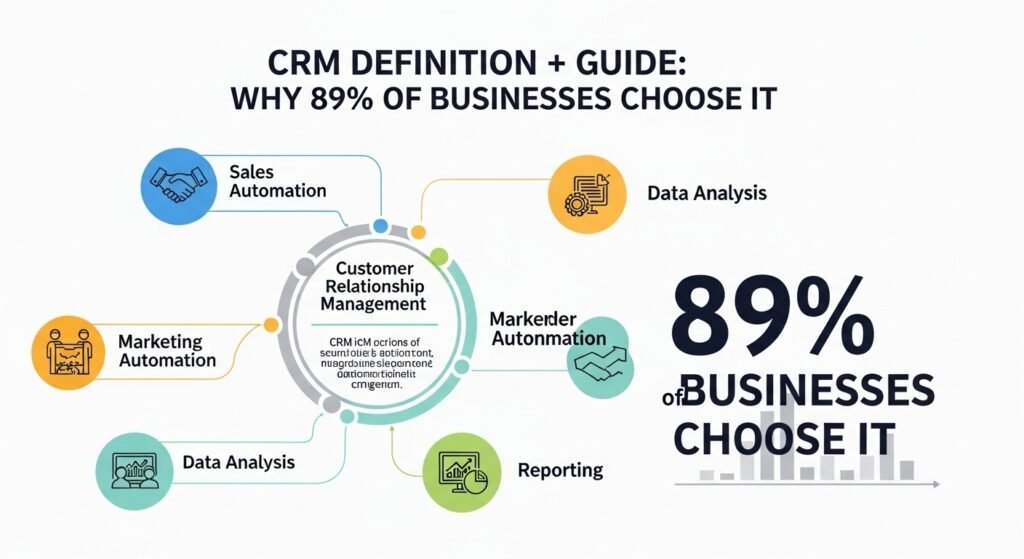

AI demonstrates its value when it saves time, reduces mistakes, and supports quick decisions while keeping risk in check. In customer care, it can triage incoming messages, suggest likely responses, route tickets, and flag cases that need immediate intervention. That is hardly a small win. In operations, AI can forecast demand for a product or service, detect fraudulent transaction patterns, and improve worker schedules. In healthcare, AI can spot abnormal signals in images or summarize a doctor’s notes (with a medical professional in the loop). In marketing, it can segment customers and generate new versions of ad copy. And for programmers, it assists with debugging and generating code.

The primary advantage, though, is consistent output you can rely on. Humans get tired and less efficient over a long shift, while AI stays the same. It gives you a result using the same process at 2 am as it does at 2 pm.

Of course, the benefits depend on the boundaries. AI gives the best results with well-defined tasks and good feedback loops. If you expect it to make the right decision in a challenging situation without human intervention, disappointment is guaranteed.

Use it where patterns are helpful. Keep humans where values and responsibility are at stake.

Examples: AI you’ve probably used in Australia without thinking

When your smartphone unlocks by recognizing your face, it is using computer vision. It identifies your facial features and compares them to a stored representation. This is not photo-to-photo matching; it is closer to pattern matching.

Movie and TV recommendations on Netflix, automatic spam filters, and shopping apps that group your browsing behavior to suggest items for you are all examples of machine learning in everyday life.

When you withdraw money from an ATM, AI-based fraud detection may kick in to verify the transaction.

The more machines get involved in daily transactions, the more important it becomes to manage them. For example, an AI checker for customer service chat replies can flag risky content.

It is probably not surprising that big data and machine learning have entered the workplace, including the contact center for customer service, even in roles built on trust and empathy that are carried out by humans. With each step of automation, fewer humans are involved.

Even listening to your favorite music through a pair of earphones is an indirect way of using AI.

Mistakes: Where people go wrong with AI

The first mistake is thinking AI is a plug-and-play brain. It’s not. It’s closer to a machine that needs the right fuel, tuning, and monitoring. Another mistake is using AI without clear success metrics. If you can’t measure response time, error rate, customer satisfaction, or cost reduction, you’ll end up arguing based on vibes.

People also forget edge cases. A model may perform well on common situations but fail badly on rare ones. And rare ones are often the expensive ones.

Over-automation is a classic. You replace too much human contact too early, customers feel ignored, and trust drops.

Privacy mistakes are another big one. Feeding sensitive customer info into tools without policies can create compliance risks.

And then there’s prompt overconfidence: assuming one good output means the system is reliable. AI can be inconsistent. It needs testing, not vibes.

AI is a system, not a feature. Treat it that way and you’ll do better.

AI vs rules vs humans (quick reality check)

Some tasks don’t need AI. They need a good rule. Others need a human. Here’s the clean comparison:

| Task Type | Rules-Based Automation | AI / ML | Human |
| --- | --- | --- | --- |
| Simple, consistent decisions | Great | Overkill | Fine |
| Pattern-heavy tasks (spam, recommendations) | Weak | Great | Slow |
| Emotional, sensitive conversations | Weak | Risky alone | Best |
| New edge cases and messy context | Breaks | Unstable | Best |
| High volume, repetitive work | Good | Great | Costly |

This isn’t “AI replaces everything.” It’s “AI replaces the right slice.”

Rules are underrated. Humans are essential. AI is the bridge for patterns at scale.

The smart teams use all three, depending on the moment.

And they monitor outcomes. Not once. Continuously.

That’s how you keep it useful.

Otherwise it becomes another tool that sounded good on a slide deck.

AI work checker

An AI work checker is usually a tool that tries to evaluate or verify something about work output. Sometimes it checks writing quality, originality, tone, or compliance. Sometimes it flags risky content in customer service replies.

The important thing is understanding what it can and cannot do. Many “AI checkers” are really pattern detectors. They look for signals and estimate likelihood, not certainty. That means false positives happen. False negatives too.

In business settings, AI checking is often better used as a quality assistant than as a judge. For example, checking whether a reply includes sensitive info, is missing policy language, or has a tone mismatch.

In education or HR contexts, it gets more complicated because the stakes are higher and the signals are messy. A checker can become unfair if treated like a truth meter.

So the best practice is simple: use checkers for guidance, not final verdicts. Pair them with human review.

That’s the balanced approach. Less drama. Better outcomes.
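A checker used as a quality assistant might look something like the sketch below. It is a hand-written pattern detector, not a trained model, and everything in it is an assumption made for illustration: the patterns, the required phrase, and the function name. The point is the shape of the output, which is a list of things for a human to look at rather than a pass or fail verdict.

```python
import re

# Hypothetical signals. Real checkers use many more, often learned from data.
CARD_LIKE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")   # 13 to 16 digits in a row
EMAIL_LIKE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
REQUIRED_PHRASE = "refund policy"                      # invented policy wording


def review_reply(text):
    """Return a list of flags for a human reviewer. An empty list means nothing stood out."""
    flags = []
    if CARD_LIKE.search(text):
        flags.append("possible card number")
    if EMAIL_LIKE.search(text):
        flags.append("possible email address")
    if REQUIRED_PHRASE not in text.lower():
        flags.append("missing policy language")
    return flags


replies = [
    "Your card 4111 1111 1111 1111 was charged. See our refund policy.",
    "Thanks, all sorted!",
    "Per our refund policy, your order number is 1234567890123.",
]
for reply in replies:
    print(review_reply(reply), "<-", reply)
```

The third reply gets flagged as a possible card number even though it only contains an order number. That is a false positive, and it is exactly why the flags go to a person instead of blocking the message automatically.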

AI work schedule generator

An AI work schedule generator sounds fancy, but the goal is pretty practical: build rosters that meet staffing needs without burning people out.

These tools often combine forecasting (predict demand) with optimization (build schedules). Forecasting might use ML to estimate how busy certain hours will be. Optimization might use classic math to place people into shifts while respecting rules.

In Australia, schedule optimization matters a lot in retail, hospitality, healthcare, and call centres. Demand swings. Sick days happen. And labour costs are real. The “AI” part can help by spotting patterns humans miss—like recurring spikes after certain campaigns, weather changes, or seasonal events.

But it still needs constraints: award rules, availability, fairness, skill levels, and local requirements. The best schedule tools don’t just produce a roster. They explain why it makes sense and allow easy overrides. Because real life always overrides the plan.
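The forecast-then-optimize split can be sketched roughly as follows. All names, numbers, and limits are invented. The forecast is a plain average rather than a machine learning model, and the roster is built with a simple greedy rule rather than a real optimizer, so treat it as an outline of the two stages and not as a production approach. It also ignores award rules and skill levels entirely.

```python
from math import ceil

# Invented history: customers seen in each time slot on three past Saturdays.
history = {
    "09:00": [10, 14, 12],
    "12:00": [30, 34, 32],
    "15:00": [18, 22, 20],
}
# Invented availability: which slots each person can work.
availability = {
    "Asha": ["09:00", "12:00"],
    "Ben": ["12:00", "15:00"],
    "Chloe": ["09:00", "12:00", "15:00"],
}
CUSTOMERS_PER_STAFF = 16   # assumed service capacity per person per slot
MAX_SLOTS_PER_PERSON = 2   # assumed fairness / fatigue limit


def forecast_staff_needed(history):
    """Stage 1, forecasting: average past demand, converted to a headcount."""
    return {
        slot: ceil((sum(counts) / len(counts)) / CUSTOMERS_PER_STAFF)
        for slot, counts in history.items()
    }


def build_roster(needed, availability, max_slots):
    """Stage 2, scheduling: fill each slot with available, least-loaded people."""
    assigned = {name: 0 for name in availability}
    roster, shortfalls = {}, {}
    for slot, count in needed.items():
        candidates = [
            name for name, slots in availability.items()
            if slot in slots and assigned[name] < max_slots
        ]
        candidates.sort(key=lambda name: assigned[name])  # spread the load
        chosen = candidates[:count]
        for name in chosen:
            assigned[name] += 1
        roster[slot] = chosen
        if len(chosen) < count:
            shortfalls[slot] = count - len(chosen)  # surfaced for a human to fix
    return roster, shortfalls


needed = forecast_staff_needed(history)
roster, shortfalls = build_roster(needed, availability, MAX_SLOTS_PER_PERSON)
print("needed:    ", needed)
print("roster:    ", roster)
print("shortfalls:", shortfalls)
```

Returning the shortfalls alongside the roster is a deliberate choice. When the constraints cannot all be met, the tool says so and leaves the override to a person, instead of quietly producing a roster that breaks a rule.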

Final Quick Wrap (without making it dramatic)

If you’ve been asking how AI works, the simplest answer is: AI learns patterns from data, then uses those patterns to predict, decide, or generate outputs. That’s the core. Everything else (deep learning, NLP, generative AI) is just different ways of doing that at scale.

FAQ (People Also Ask)

1) How does AI work step by step?

It takes input data, learns patterns during training, then uses those patterns to make predictions or generate outputs. It improves through feedback and repeated adjustment.

2) What is the difference between AI and machine learning?

AI is the umbrella term. Machine learning is a common approach inside AI where systems learn from data rather than being hand-coded with fixed rules.

3) How does generative AI create text?

It predicts the next piece of text based on patterns learned from massive datasets. It’s generating likely continuations, not “looking up” truth unless connected to sources.

4) Can AI be wrong even if it sounds confident?

Yes. Confidence in wording is not proof. AI can hallucinate or misinterpret prompts, especially when information is unclear or outside its training patterns.

5) What are real examples of AI in everyday life?

Face recognition, spam filtering, recommendations on streaming/shopping apps, navigation predictions, fraud detection, and customer service routing.

6) Is AI replacing jobs in customer support?

It’s changing tasks more than replacing entire roles. AI handles repetitive questions and routing, while humans handle edge cases, empathy, and complex decisions.

7) What data does AI need to work well?

High-quality, relevant, representative data. Bad or biased data leads to bad or biased outputs, even if the model is advanced.

8) What’s the safest way to use AI in a business?

Use it for defined tasks, measure outcomes, keep humans in the loop for high-stakes decisions, and set privacy/security rules for data handling.

…and yeah, once you notice AI in your day, you start seeing it everywhere. Not in a scary way. More like background electricity. It’s just there, doing its thing, sometimes helpful, sometimes a bit weird, and you adjust as you go.