I keep hearing people use the phrase “artificial intelligence” as if everyone knows what it means. They don’t. Half my mates think AI just means Siri and the other half think we’re five years from robots taking over. Both wrong. So I figured I’d write something that actually explains what artificial intelligence is without making your eyes glaze over.

Alright, but what IS artificial intelligence, really?

Picture this: you’ve got software that can do things we always assumed needed a human brain, like recognising someone’s face in a photo, translating a menu when you’re overseas, or catching a weird transaction on your bank card at 2am. The machine isn’t actually “thinking,” though. Not the way you and I do. What it does is process huge amounts of data and detect patterns far faster than any human could. Some systems improve over time by learning from new data, while others just follow rules a programmer wrote in advance. Technically both count as AI, which is exactly why the term is so hard to pin down. And once newer branches like generative AI enter the conversation, the confusion gets worse, because people mistake convincing machine output for human-like intelligence.

Should I even bother learning about this?

Short answer — yes. AI is already making decisions that affect your life. Which emails get buried in spam. Whether a bank says yes to your home loan. Which job applicants make it past the first screen. That’s happening today with or without your input. The people around you at work are figuring this out quickly. You don’t need a computer science degree. But understanding the basics means you can smell BS when a company slaps “AI-powered” on a mediocre product. That alone makes it worthwhile.

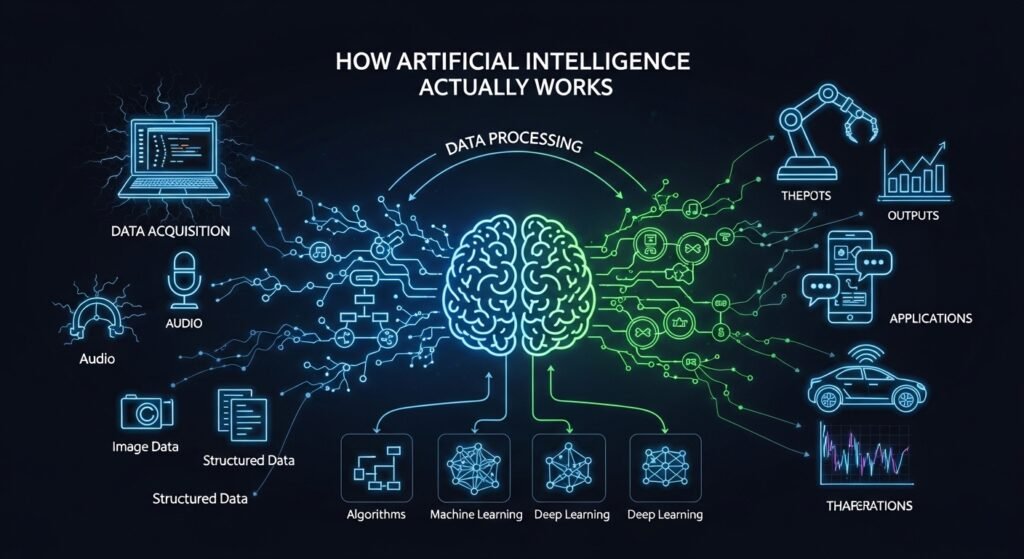

How Artificial Intelligence Actually Works — the Short Version

Right, so at the base of most AI you’ve got this thing called machine learning. Instead of a programmer writing rules for every scenario, you feed the software a heap of data and let it figure out the patterns itself. Take spam filters. Nobody wrote a rule for every scam email that exists. They showed the system millions of emails already flagged as spam or not spam and let it work out what the spam ones had in common. That’s machine learning in a nutshell. There’s supervised learning, where every example comes with a label. Unsupervised learning, where the system groups things on its own. And reinforcement learning, where it tries stuff, gets a score, and keeps adjusting. Each works better for different problems, and picking the wrong one can waste months.
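If you want to see what “let it figure out the patterns itself” looks like, here’s a minimal sketch of supervised learning in Python. It uses naive Bayes, one classic and very simple technique for text classification; it’s not a claim about how any particular email provider’s filter works. The six training emails are made up, and `train` and `classify` are just names picked for the illustration. Notice there’s no rule anywhere saying “free” is suspicious. The model picks that up from the labels.

```python
import math
from collections import Counter

# Tiny made-up training set. "ham" is the usual nickname for not-spam.
TRAINING = [
    ("win a free prize now", "spam"),
    ("claim your free cash prize", "spam"),
    ("urgent: verify your bank account now", "spam"),
    ("lunch tomorrow at noon?", "ham"),
    ("here are the meeting notes", "ham"),
    ("can you send the report tomorrow", "ham"),
]

def train(examples):
    """Count how often each word shows up under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, label_counts, vocab

def classify(text, word_counts, label_counts, vocab):
    """Return whichever label gives the message the higher naive Bayes score."""
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        score = math.log(label_counts[label] / total)  # how common the label is overall
        n_words = sum(word_counts[label].values())
        for word in text.lower().split():
            # Add-one smoothing so a word never seen under this label
            # lowers the score instead of ruling the label out completely
            count = word_counts[label][word]
            score += math.log((count + 1) / (n_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

model = train(TRAINING)
print(classify("free prize inside", *model))                # spam
print(classify("send the meeting notes tomorrow", *model))  # ham
```

Real filters train on millions of emails and far richer signals than single words, but the core move is the same: learn from labelled examples instead of hand-written rules.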

Then there’s deep learning: machine learning using neural networks with way more layers stacked inside. Dozens or hundreds of processing layers deep. That extra depth lets these models deal with messy stuff like photos, voice recordings, raw text. Before 2012, computers were honestly bad at recognising what was in a picture. Deep learning came along and within a few years they were outperforming humans on certain tests. Wasn’t some genius breakthrough. Mostly just more data plus faster chips plus deeper networks. Simple recipe, wild outcome.
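To make “layers stacked inside the network” concrete, here’s a rough sketch of data flowing through a small stack of fully connected layers in plain Python. The layer sizes, the ReLU activation and the random weights are all assumptions for illustration, and the network is untrained, so its output is meaningless. The point is the shape of the thing: each layer’s output becomes the next layer’s input, and “deeper” just means more entries in `sizes`. Training (nudging the weights to fit data, usually via backpropagation) is left out entirely.

```python
import random

def relu(x):
    """Keep positive values, zero out negatives."""
    return max(0.0, x)

def make_layer(n_in, n_out, rng):
    """One fully connected layer: a weight for every input-output pair, plus biases."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def forward(layers, inputs):
    """Push the inputs through every layer in turn; each layer feeds the next."""
    activations = inputs
    for i, (weights, biases) in enumerate(layers):
        # Weighted sum of the previous layer's outputs for each node in this layer
        z = [sum(w * a for w, a in zip(row, activations)) + b
             for row, b in zip(weights, biases)]
        is_output_layer = i == len(layers) - 1
        activations = z if is_output_layer else [relu(v) for v in z]
    return activations

rng = random.Random(0)  # fixed seed so the run is repeatable
sizes = [4, 8, 8, 8, 2]  # 4 inputs, three hidden layers of 8 nodes, 2 outputs
layers = [make_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]
print(forward(layers, [0.5, -0.2, 0.1, 0.9]))  # two numbers; meaningless until trained
```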

Different Types of AI — What Exists and What Doesn’t Yet

Here’s what trips people up. Researchers talk about three levels. Narrow AI is number one and the only kind that’s real right now. Your phone’s face unlock. Google Maps predicting traffic. Spotify knowing your taste before you do. All narrow. Brilliant at one task, useless at anything else. Then general AI — a machine that reasons across any topic like a person does. Doesn’t exist. Despite what some overconfident founders on Twitter claim. Super AI? Something smarter than every human combined. Science fiction for now, probably for a long time. But narrow doesn’t mean weak. A narrow AI beat the world’s best Go player. It just can’t make you a cup of tea after.

Types of AI Models — Under the Hood

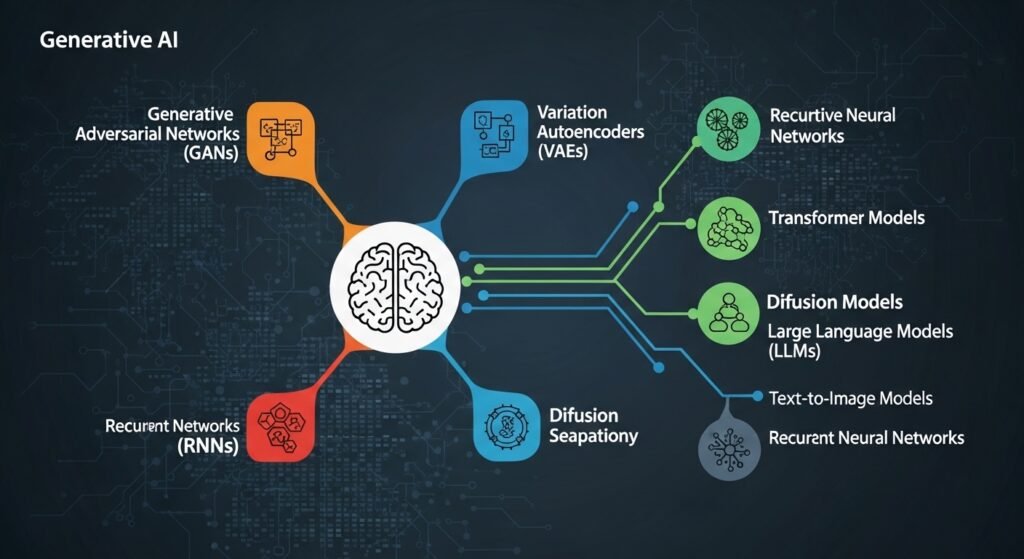

So if you’re wondering what actually powers these tools, it comes down to different model types and training approaches. Neural networks run data through layers of connected nodes, loosely inspired by how the brain works, though that analogy gets stretched pretty thin honestly. Transformers are the big deal right now. They’re what’s behind ChatGPT, Claude, Gemini, all of them. Instead of reading word by word, they process a whole passage in parallel using something called an attention mechanism, which lets every word weigh up how relevant every other word is. Way faster to train, way better at context. Then you’ve got decision trees, which are simpler: just branching if-then logic. Good for stuff like loan approvals where you need to explain the reasoning. GANs are interesting: two networks basically fighting each other, one generating fake images and the other trying to spot the fakes, until the fakes look real. And reinforcement learning is the trial-and-error approach that helped power AlphaGo. Different tools for different jobs.

| Model | How It Works | Best For | Example |
|---|---|---|---|
| Neural Networks | Layered node processing | Images, speech | Google Photos |
| Transformers | Attention mechanisms | Text, translation | GPT, Claude |
| Decision Trees | If-then branching | Risk scoring | Loan systems |
| GANs | Two competing networks | Image synthesis | StyleGAN |
| Reinforcement Learning | Trial and reward | Robotics, games | AlphaGo |
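Here’s a minimal sketch of the attention idea from the Transformers row, in plain Python. It’s scaled dot-product attention, the core operation from the original Transformer paper, stripped right down: real models first pass each token through learned projection matrices to get the queries, keys and values, and run many attention “heads” side by side, none of which is shown here. The toy vectors are made up.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max so exp() can't overflow
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: each output is a weighted blend of all the values."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        # Score how relevant every token's key is to this query
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Mix the value vectors according to those weights
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Two toy "tokens" as 2-number vectors. In self-attention the same tokens supply
# the queries, keys and values (real models transform them first; skipped here).
tokens = [[1.0, 0.0], [0.0, 1.0]]
print(attention(tokens, tokens, tokens))  # each token stays mostly itself, with some of the other mixed in
```

Each query’s output doesn’t depend on any other query’s, which is why real implementations can compute them all at once as one big matrix multiplication. The loop here is just easier to read.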

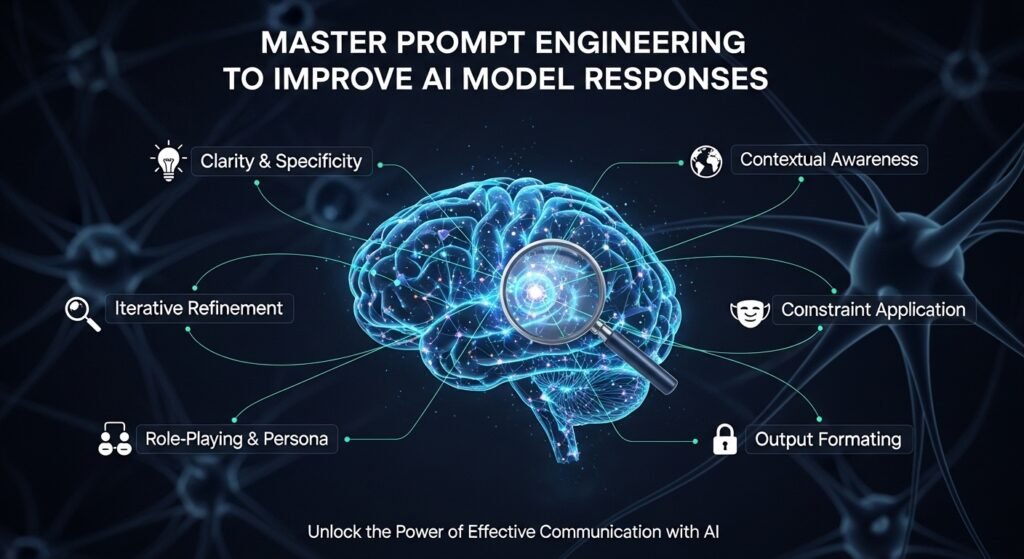

Types of Generative AI — Why Everyone Won’t Shut Up About It

I get why this one dominates the news. Generative AI doesn’t just sort things or predict outcomes. It creates stuff: text, images, music, code. The big names are large language models like GPT and Claude for writing, and diffusion models like Midjourney for images. Audio and video tools are popping up every week now. What folks don’t realise is that generative AI isn’t a separate branch. It’s built on top of deep learning. Same foundation, different trick. I’ve watched marketing teams go from days on a campaign to under an hour, and developers bang out boilerplate before lunch. But it hallucinates, making stuff up with total confidence. Outputs feel generic if you’re lazy with prompts. And copyright? Still a mess.
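Under the hood, text generation is a loop: look at what’s been written so far, pick a likely next word, append it, repeat. Here’s a deliberately tiny sketch of that loop in Python using a bigram (Markov chain) model, a far simpler technique than a large language model. It only looks at the single previous word and just counts which words followed it in a one-line made-up corpus. An LLM swaps the counting for a huge neural network that scores every possible next token given the whole context, but the outer one-step-at-a-time loop has the same shape. It also hints at why hallucination happens: the model picks what plausibly comes next, with no built-in check on whether it’s true.

```python
import random
from collections import defaultdict

def train_bigrams(text):
    """Record every word that ever followed each word in the training text."""
    words = text.split()
    following = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        following[current].append(nxt)
    return following

def generate(following, start, max_words, seed=0):
    """Start from one word, then repeatedly sample a plausible next word."""
    rng = random.Random(seed)
    out = [start]
    while len(out) < max_words:
        options = following.get(out[-1])
        if not options:  # dead end: this word never had a successor in training
            break
        # Words that followed more often appear more often in the list,
        # so they're proportionally more likely to be picked
        out.append(rng.choice(options))
    return " ".join(out)

corpus = "the cat sat on the mat and the dog sat on the rug"
bigrams = train_bigrams(corpus)
print(generate(bigrams, "the", max_words=8))
```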

Natural Language Processing — How Machines Finally Started Understanding Us

NLP is probably the AI branch that touches your routine most, and you barely clock it. Your phone finishing your sentence. A chatbot answering instead of a human. Google translating a webpage from Korean in two seconds flat. All NLP. And I remember when this stuff was genuinely awful. Before 2017, chatbots felt like talking to a brick wall. Then the transformer architecture showed up and rewired the whole field. Now models track meaning across paragraphs, pick up sarcasm in reviews, and summarise hundred-page contracts. Brands run sentiment analysis on social media with it. Law firms automate contract review. Companies run customer support at a scale that would’ve needed hundreds of staff a decade ago.

AI Agents — This Is Where Things Get Properly Interesting

So here’s the bit I’m most excited about. AI agents are a different animal from chatbots. A chatbot waits for your question. An agent goes and does things. It plans steps, uses tools, browses the web. Tell a chatbot you want Italian tonight and it gives you a list. Tell an agent and it checks your calendar, finds places with open tables, books one, and pings your phone with the details. The industry calls this shift agentic AI: moving from creating content to taking action. Get several agents splitting one complex task between them and you’ve got a multi-agent system, which is agentic AI in full swing. It’s still rough around the edges. Agents misread instructions, get stuck in loops, do random things. But they’re improving fast. Google, Anthropic and Microsoft are all throwing serious money at this, because whoever nails reliable agents first basically rewrites how work gets done.
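Here’s a toy sketch of the loop behind the Italian-dinner example, in Python. Everything in it is hypothetical: the four tools are fakes that just write to a dictionary, the restaurant is made up, and `choose_next_step` is a hard-coded stand-in for the part where a real agent would ask a language model what to do next. The shape is what matters: look at the current state, pick a tool, run it, record the result, repeat until the goal is met. The `max_steps` cap is one simple guard against the getting-stuck-in-loops problem.

```python
# Every tool here is a hypothetical fake. A real agent would call a calendar
# API, a booking site, a messaging service, and so on.
def check_calendar(state):
    state["free_time"] = "19:30"
    return f"Free at {state['free_time']}"

def find_tables(state):
    state["restaurant"] = "Trattoria Example"  # made-up restaurant
    return f"{state['restaurant']} has a table at {state['free_time']}"

def book_table(state):
    state["booked"] = True
    return f"Booked {state['restaurant']} for {state['free_time']}"

def notify(state):
    state["notified"] = True
    return "Sent booking details to your phone"

TOOLS = {
    "check_calendar": check_calendar,
    "find_tables": find_tables,
    "book_table": book_table,
    "notify": notify,
}

def choose_next_step(state):
    """Hard-coded stand-in for the language model: see what's done, pick what's next.

    The goal is stored in state but this toy planner ignores it; a real model would read it.
    """
    if "free_time" not in state:
        return "check_calendar"
    if "restaurant" not in state:
        return "find_tables"
    if not state.get("booked"):
        return "book_table"
    if not state.get("notified"):
        return "notify"
    return None  # goal reached, nothing left to do

def run_agent(goal, max_steps=10):
    """Plan, act, observe, repeat, with a hard cap on the number of steps."""
    state = {"goal": goal}
    log = []
    for _ in range(max_steps):  # the cap stops a confused agent looping forever
        step = choose_next_step(state)
        if step is None:
            break
        log.append(TOOLS[step](state))  # run the tool and record what happened
    return log

for line in run_agent("Italian for dinner tonight"):
    print(line)
```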

Data — The Boring Part That Actually Decides Everything

Nobody writes exciting headlines about data quality. But here’s what most AI articles skip. Your model is only as good as what you feed it. Full stop. Shove biased data in, get biased results out. Leave gaps and the model develops blind spots it can’t fix on its own. Feed it noisy info and the outputs become unreliable. Every time. If someone’s pitching you an AI product, forget the buzzwords and ask what data it was trained on. That one question tells you more than any demo ever could.

AI Ethics and Governance — The Uncomfortable Conversation

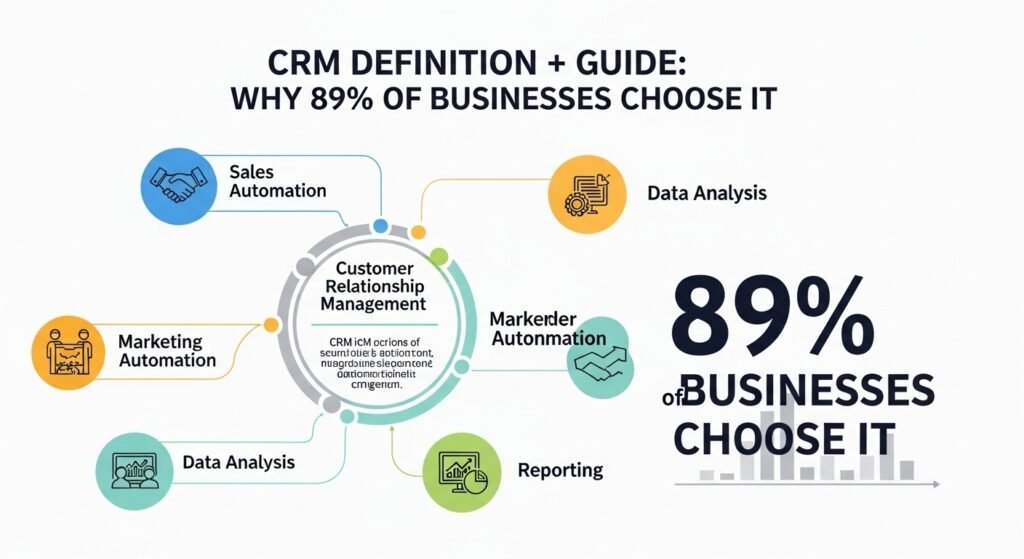

Not the fun part, I know. But you can’t write about AI in 2026 and skip it. AI systems soak up biases from their training data, and that causes real problems. Loan rejections based on postcode patterns that were basically proxies for race. Hiring tools filtering out women because the historical data was full of male hires. Facial recognition performing worse on darker skin. All documented, not theoretical. Responsible AI means explainability, so you can trace why the algorithm decided what it decided. Fairness audits before deployment. Security against attacks. Someone being accountable when things go wrong. The EU AI Act and GDPR are pushing companies in that direction, but honestly most are still scrambling.
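One basic check a fairness audit might include is comparing how often a model says yes to different groups. Here’s a minimal sketch in Python with made-up decisions for two groups, “A” and “B”. It works out each group’s approval rate and the ratio of the lowest to the highest, then flags anything under 0.8, borrowing the “four-fifths rule” rule of thumb from US employment guidance. The function names are just for illustration, and a low ratio is a signal to investigate, not proof of bias on its own. Real audits look at far more than this one number.

```python
from collections import defaultdict

def approval_rates(decisions):
    """decisions is a list of (group, approved) pairs; returns approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact(decisions):
    """Lowest group approval rate divided by the highest (1.0 means equal rates)."""
    rates = approval_rates(decisions)
    return min(rates.values()) / max(rates.values())

# Made-up model decisions: 100 applicants from group A, 100 from group B
decisions = ([("A", True)] * 60 + [("A", False)] * 40
             + [("B", True)] * 35 + [("B", False)] * 65)

ratio = disparate_impact(decisions)
print(f"ratio = {ratio:.2f}")  # ratio = 0.58
if ratio < 0.8:  # the "four-fifths" rule of thumb from US employment guidance
    print("Flag for review: one group is approved far less often than another")
```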

Quick History — Turing to ChatGPT

AI’s been around longer than people think. Alan Turing asked whether machines could think back in 1950. Neural networks showed up in the late fifties, then went dormant when funding dried up during the stretches now called the AI winters. IBM’s Deep Blue beat world chess champion Garry Kasparov in 1997. IBM’s Watson won Jeopardy! in 2011. Deep learning started crushing image benchmarks in 2012. Then in late 2022 ChatGPT launched, and suddenly your nan was asking about large language models over Christmas dinner. Now in 2026 the action is in multimodal models that handle text, images and audio all at once, plus smaller models that run on phones instead of massive data centres.

Mistakes People Keep Making With AI

The one I see constantly? Lumping all AI together. A clunky chatbot from 2018 and a modern large language model might as well be different species. Another big one is thinking current AI is smarter than it is. Pattern matching? Incredible. Reliable reasoning? Still hit and miss. People also forget about data quality. And skipping the bias conversation because launch day is Tuesday isn’t a strategy. It’s how you end up in the news for the wrong reasons.

Frequently Asked Questions

What are the main types of AI?

Three levels. Narrow AI does specific tasks and is the only kind that exists right now. General AI would match human-level thinking across every domain. Super AI would be beyond all of us combined. The last two are still theoretical and probably will be for a while.

Is machine learning the same as AI?

Nope. Machine learning is one piece of AI. All ML counts as AI but plenty of AI systems don’t use machine learning at all — some just follow pre-programmed rules with zero learning involved.

How is generative AI different from regular AI?

Regular AI sorts things into categories or predicts what might happen next. Generative AI makes new stuff from scratch — writes text, creates images, generates code. Same family tree, totally different output.

What do AI agents actually do?

They don’t just answer you — they go do things. Plan steps, use outside tools, handle complex tasks across multiple stages without needing you to micromanage every bit. Think of them as AI that takes action, not just AI that talks.

Can AI be biased?

100%. It picks up whatever biases are buried in the data it learned from. There are real documented cases in hiring, lending, and facial recognition. Some companies audit for this now. Many still don’t. That’s a problem.

Anyway that’s my take on all of this. Tried to keep it real and not repeat the same three definitions in ten different ways like most AI articles do. If anything here helped something click for you that didn’t before, that’s a win. This stuff moves fast — some of the details might look different by this time next year. But the big picture? That’s going to hold up for a good while I reckon.