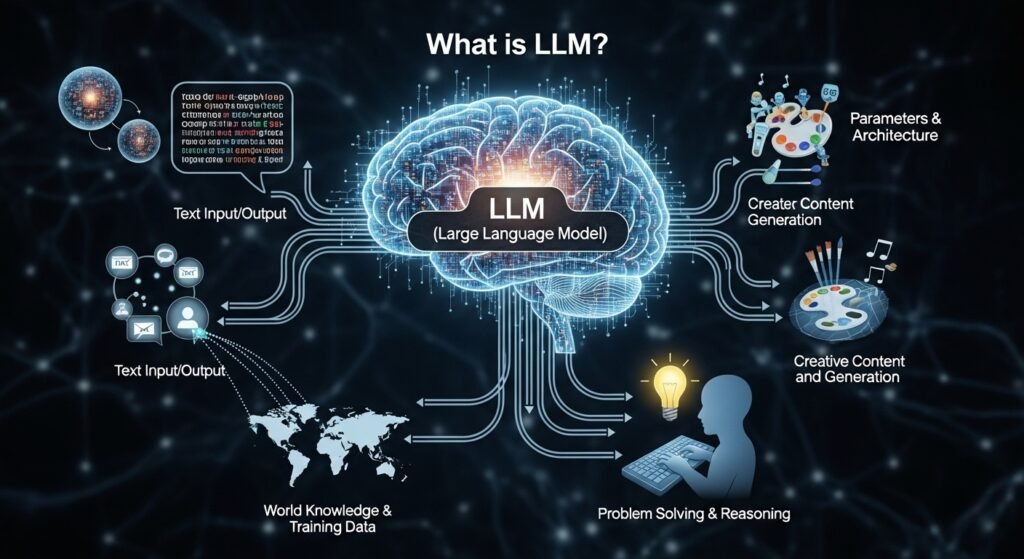

A large language model is an AI system trained on huge amounts of text so it can predict, generate, rewrite, summarise, and reason through language-like tasks. It does not “think” the way people do, but it can detect patterns in words at a scale that makes it useful for search, coding, support, writing, research, and a lot more. Today, most modern generative AI products sit on top of that basic idea.

What is a large language model?

It is a deep learning model, usually built on transformer architecture, trained on very large datasets so it can understand and generate text.

Is it worth using?

Yes, when the task involves drafting, coding help, summarising, search support, or question answering. Not always, though. Accuracy still depends on the model, the prompt, and the data around it.

How does it work?

At the core, it predicts the next token in a sequence, using patterns learned from massive text corpora and transformer-based self-attention.

When people first hear about this space, they usually start with the obvious question: what exactly are we talking about here? Is it a chatbot? A search engine? A writing tool? A coding assistant? The answer is a bit annoying because it is all of those things, depending on how the system is packaged.

A large language model is really the engine underneath. The visible product might be a chat app, a help desk bot, a developer tool, or an enterprise search assistant. Same underlying family, different use cases. That distinction matters because many articles flatten the topic too much. They explain the tech, but skip the practical part. Or they hype the practical part and barely explain the tech. Neither helps.

What Is an LLM?

If you want the simple version, the phrase usually means a very large AI language model trained on an enormous amount of text so it can continue text, answer questions, summarise documents, translate content, and generate code. Cloudflare describes it as a type of AI program that can recognise and generate text, while AWS explains that these are deep learning models pre-trained on vast amounts of data and built on transformers. That overlap is important. The field uses different wording, but the underlying idea is stable.

What the term means in AI goes beyond the acronym. It points to a broader shift in how software behaves. Traditional software follows explicit rules. A language model learns statistical patterns from data, then uses those patterns to respond in flexible ways. That is why it can handle messy, natural questions better than a rigid rules engine. It is also why it sometimes sounds confident when it should slow down. The flexibility is the strength. It is also the risk.

Another thing people miss: size alone is not the whole story. Bigger models can be more capable, yes, but deployment quality, tuning, retrieval, safety layers, and evaluation matter just as much. A smaller model with strong retrieval can outperform a larger one on a company’s internal knowledge base. So the acronym may look technical and neat. The real-world picture is messier than that.

How It Works Without the Overcomplicated Version

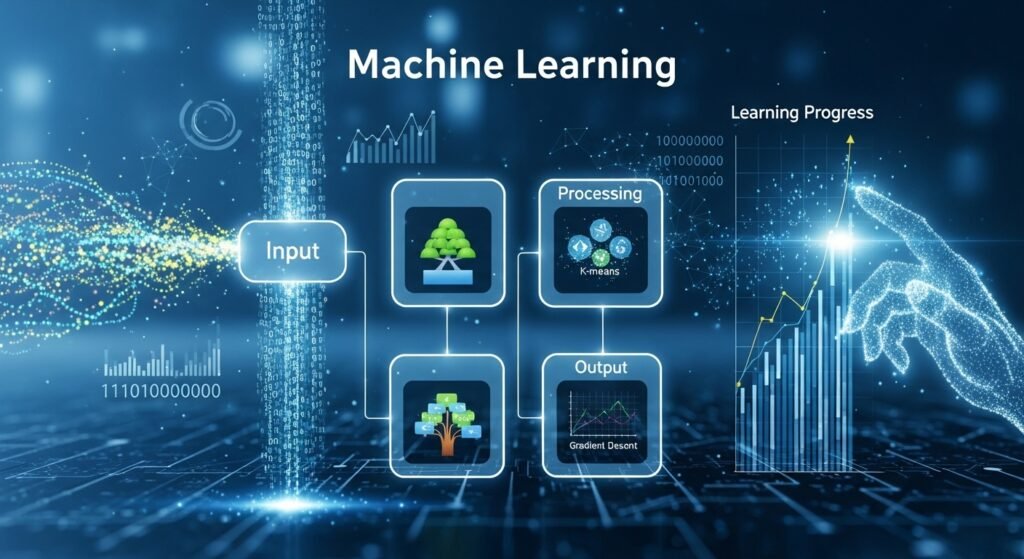

Under the hood, these systems are usually based on transformer architecture. AWS notes that transformers use self-attention to understand relationships between words and phrases, and they can process sequences in parallel rather than one token at a time the way older recurrent systems did. That parallelism is a big reason modern models scaled so fast. It made training on massive datasets more practical.
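
To make self-attention a little less abstract, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not how a production model is built: real transformers learn separate projection matrices for queries, keys, and values and run many attention heads, while this toy reuses the same made-up vectors for all three. The function names are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over a short sequence of vectors."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Score this position against every position in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to the attention weights.
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
    return outputs

# Three made-up 2-dimensional token vectors, reused as queries, keys, and values.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(tokens, tokens, tokens)
```

Each position's output depends only on the full set of keys and values, not on the output of the previous position. That independence is what lets the work be done in parallel.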

In practice, the model sees a sequence of tokens and learns to predict what comes next. Not magic. Prediction at massive scale. After enough training, that simple mechanism starts producing surprisingly useful behaviour: drafting emails, explaining code, answering questions, rewriting tone, translating content, even helping with analysis. Cloudflare’s explanation gets to the heart of it by framing deep learning as a probabilistic process over unstructured data. That sounds dry, but it explains why output feels fluid rather than rule-bound.
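
The "predict what comes next" idea can be shown with a deliberately tiny stand-in. The sketch below is a bigram counter, not a transformer: it only looks at the previous word, and it treats whitespace-separated words as tokens. The corpus and function names are invented for illustration. The loop is the same in spirit, though: estimate probabilities for the next token, pick one, append it, repeat.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count which token follows which in the training text."""
    tokens = text.lower().split()
    follow = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follow[current][nxt] += 1
    return follow

def next_token_probs(follow, token):
    """Return a probability for each token seen after `token`."""
    counts = follow.get(token, Counter())
    total = sum(counts.values())
    return {tok: n / total for tok, n in counts.items()} if total else {}

def generate(follow, start, max_tokens=5):
    """Greedy generation: repeatedly append the most likely next token."""
    out = [start]
    for _ in range(max_tokens):
        probs = next_token_probs(follow, out[-1])
        if not probs:
            break  # nothing was ever seen after this token
        out.append(max(probs, key=probs.get))
    return " ".join(out)

# A made-up one-sentence corpus.
corpus = "the model predicts the next token and the model repeats"
model = train_bigram(corpus)
probs_after_the = next_token_probs(model, "the")
```

In this corpus "the" is followed by "model" twice and "next" once, so the toy assigns "model" the higher probability. A real model does the same kind of ranking over a far larger vocabulary and with far more context.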

Then comes fine-tuning or task-specific optimisation. The base model may know a lot of general language patterns, but that does not automatically make it good for legal drafting, medical summarisation, or software engineering. Teams usually add instruction tuning, retrieval, safety controls, tool use, guardrails, or domain-specific prompting. That is where an average assistant becomes a useful one. Or doesn’t.

Why Large Language Models Matter More Than the Hype Cycle

The reason this technology matters is not that it can write a paragraph in three seconds. Plenty of flashy demos do that. The real shift is that one model can support many language-heavy tasks without being rebuilt from scratch every time. AWS highlights this flexibility clearly: the same model family can answer questions, summarise text, translate language, and complete sentences. That multi-purpose nature is what changed the market so fast.

For businesses, that means customer support, document search, workflow automation, internal knowledge access, and drafting tools are now easier to build than they were a few years ago. For individual users, it means faster writing, faster learning, and sometimes a strange feeling that software suddenly understands vague instructions. Not perfectly. But enough to change expectations.

Search is changing because of this too. People no longer only want links. They want synthesis. They want the first pass done for them. That shift is why large language models are now tied to search, copilots, assistants, and productivity platforms. Once users get used to natural-language interaction, going back to rigid inputs feels old quite quickly.

Benefits That Actually Matter in Real Use

The strongest benefit is speed. A good model can turn hours of rough drafting into twenty minutes of editing. That applies to content teams, analysts, support staff, researchers, and developers. The model does not remove expertise, but it reduces blank-page friction. For many teams, that is the first real win.

The second benefit is accessibility. A non-technical user can ask a plain-language question and still get something usable back. Cloudflare points out that these systems respond to unpredictable and unstructured queries, unlike traditional software that expects precise commands. That sounds small. It is not. It changes who gets value from software.

Then there is breadth. One model can power summarisation, Q&A, rewriting, extraction, translation, and chat. That does not mean it is best-in-class at everything. Still, the ability to serve many functions from one AI layer is commercially important. It lowers product complexity, at least at the interface level, even if the backend gets more demanding.

Where Things Break: Hallucinations, Confidence, and Context Gaps

This is the part that should always sit next to the hype. A language model can produce text that sounds clean, sure, polished, even authoritative, while being wrong. Sometimes slightly wrong. Sometimes very wrong. The problem is not only hallucination. It is presentation. The answer often arrives in a tone people trust.

One practical fix is retrieval-augmented generation. AWS defines RAG as a method that optimises output by letting the model reference an authoritative knowledge base outside its training data before generating a response. In plain terms, you stop asking the model to remember everything and instead let it fetch the right context at runtime. That tends to improve relevance and accuracy without retraining the whole model.
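
A rough sketch of that fetch-then-generate flow, under loud assumptions: the knowledge base is three invented sentences, retrieval is plain keyword overlap standing in for the embedding search most real systems use, and the final model call is left out entirely. All names are hypothetical.

```python
def score(query, document):
    """Count how many distinct query words appear in the document."""
    query_words = set(query.lower().split())
    doc_words = set(document.lower().split())
    return len(query_words & doc_words)

def retrieve(query, documents, top_k=2):
    """Return up to top_k documents with the highest overlap, dropping zero matches."""
    ranked = sorted(documents, key=lambda d: score(query, d), reverse=True)
    return [d for d in ranked[:top_k] if score(query, d) > 0]

def build_prompt(query, documents):
    """Put retrieved context in front of the question before it goes to a model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

# An invented knowledge base for illustration only.
knowledge_base = [
    "Refunds are processed within 5 business days.",
    "Support is available on weekdays from 9 to 5.",
    "Shipping to Europe takes 7 to 10 days.",
]
prompt = build_prompt("how long do refunds take", knowledge_base)
```

The point of the design is that the model only sees the refund sentence, not the whole knowledge base, so the answer is anchored to a specific source rather than to whatever the model happens to remember.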

Even then, quality is not automatic. Bad retrieval, stale documents, weak chunking, and poor prompts still create weak outputs. So the mistake many teams make is blaming the model when the system around the model is the real issue. Fair enough. Sometimes the model is the issue too. But not always.
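
Chunking is one of the easier pieces to picture. The sketch below is one simple approach among several, assuming word-based chunks with a fixed overlap; the sizes are arbitrary placeholders, and real pipelines often split on tokens, sentences, or headings instead.

```python
def chunk_words(text, size=50, overlap=10):
    """Split text into chunks of `size` words, each sharing `overlap` words with the previous one."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks

# Tiny sizes so the overlap is easy to see.
sample_chunks = chunk_words("a b c d e f g h i j", size=4, overlap=2)
```

The overlap exists so that a sentence cut at a chunk boundary still appears whole in at least one chunk. Too little overlap loses context; too much bloats the index with near-duplicates.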

LLM Leaderboard and Benchmarks

People love a leaderboard because it feels decisive. Pick the top model, move on. Real life is not that tidy. Public ranking pages can help, but they measure different things and often blend provider-reported results with third-party evaluations. Vellum’s leaderboard, for example, says it shows current public benchmark performance for state-of-the-art model versions and focuses on more recent, non-saturated benchmarks rather than stale ones. That is useful, but it still needs interpretation.

Benchmarks matter because they create shared tests. Stanford’s HELM project is built around broad, transparent evaluation of foundation models rather than a single vanity score. That is a healthier direction. Different systems behave differently across reasoning, safety, accuracy, instruction following, and domain tasks, so one number rarely tells the full story.

Then there are task-specific evaluations like SWE-bench for software engineering. Its official site explains that SWE-bench Verified is a human-filtered subset and reports the percentage of instances solved. That kind of benchmark is far more useful for coding workflows than a vague “overall smartness” score. So when someone asks about leaderboards, the better answer is this: first decide the task, then choose the benchmark that reflects that task.
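
A "percentage of instances solved" metric is simple arithmetic, which is part of its appeal. The sketch below shows the calculation on invented data; the instance IDs and pass/fail outcomes are placeholders, not real benchmark results, and the function name is hypothetical.

```python
def resolved_rate(results):
    """Percentage of task instances marked as solved."""
    if not results:
        return 0.0
    solved = sum(1 for passed in results.values() if passed)
    return 100.0 * solved / len(results)

# Invented instance IDs and outcomes, purely for illustration.
run = {"task-001": True, "task-002": False, "task-003": True, "task-004": True}
rate = resolved_rate(run)  # 3 of 4 instances solved
```

What makes a number like this meaningful is not the formula but how each instance is judged as solved, which is why the human-filtered subset matters.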

Here is a simple way to think about the landscape:

| Evaluation type | What it tells you | Why it matters |
| --- | --- | --- |
| General capability leaderboard | Broad relative performance across mixed tasks | Good for quick market scanning |
| Holistic benchmark frameworks | Performance across multiple scenarios and risks | Better for nuanced model selection |
| Coding benchmarks | Ability to solve real software tasks | Useful for developer tools and agents |
| Retrieval-based evaluations | How well a system answers using external data | Important for enterprise search and internal docs |
| Human preference arenas | Which outputs people tend to prefer | Useful, but subjective |

That table is simple on purpose, because people often overcomplicate evaluation talk and still end up picking on brand recognition alone.

Best LLM for Coding

This depends on what “coding” means in your workflow. Code completion inside an editor is not the same thing as debugging across a large repo. Nor is that the same as acting like an autonomous software agent. SWE-bench exists because ordinary coding demos do not test real maintenance work very well. The benchmark focuses on issue resolution in real repositories, which is a better proxy for serious engineering support.

If you are comparing models for coding, you should look at software-engineering evaluations, tool-use performance, latency, context window, and cost. A model that writes tidy snippets may still struggle with repo navigation, test repair, or multi-file reasoning. This is where public coding leaderboards can help, but only if you read the fine print. Vellum’s coding leaderboard and SWE-bench style measurements are more practical than generic chatbot rankings for developer use.

For teams, the best model is often not the one with the highest raw score. It is the one that fits the stack, handles longer context well enough, integrates with tools, and does not blow up cost at production scale. That answer is less dramatic than “use the smartest one.” Still true. Usually more useful too.
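
The cost point is easy to check with back-of-envelope arithmetic. The sketch below assumes pricing is quoted per million input and output tokens, which is a common convention but not universal. Every number in it is a placeholder rather than any provider's real price.

```python
def monthly_cost(requests_per_day, input_tokens, output_tokens,
                 input_price_per_million, output_price_per_million, days=30):
    """Estimate monthly spend from per-request token counts and per-million-token prices."""
    per_request = (input_tokens * input_price_per_million
                   + output_tokens * output_price_per_million) / 1_000_000
    return requests_per_day * days * per_request

# Placeholder workload: 10,000 requests a day, 2,000 input and 500 output tokens each.
small = monthly_cost(10_000, 2_000, 500,
                     input_price_per_million=0.5, output_price_per_million=1.5)
large = monthly_cost(10_000, 2_000, 500,
                     input_price_per_million=5.0, output_price_per_million=15.0)
```

With these made-up prices the same workload comes to about 525 a month on the cheaper model and about 5,250 on the pricier one, in whatever currency the prices use. A tenfold gap like that is often what decides the choice, not a few benchmark points.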

Using a Large Language Model Online

Most people first interact with this technology online through chat interfaces, AI search tools, writing assistants, coding copilots, or embedded support bots. The convenience is obvious. You open a browser, paste text, ask a question, and get a workable answer. For individuals, that low-friction access is why adoption happened so fast.

But online access changes the risk profile as well. You need to think about privacy, data retention, source grounding, and whether the tool is meant for casual public use or business workflows. An online assistant may be fine for brainstorming headlines. It may be the wrong place for confidential financial data, contracts, or private client material. That part still gets ignored far too often.

The other thing worth saying is that an online interface is not the same as a production-grade AI system. A polished chat box can hide weak retrieval, weak governance, or vague source handling. So yes, online tools are convenient. Just do not confuse convenience with reliability.

Real Examples So the Topic Feels Less Abstract

A retailer might use a language model to summarise customer reviews and extract recurring complaints. A law firm might use one for first-pass document classification, though with very careful review. A software company might pair one with repo access, ticket context, and tests to help engineers move faster. A university might use one to answer common admin questions from a verified knowledge base.

In customer support, the model can draft responses, triage intent, and route tickets. In marketing, it can help with ideation, rewrites, and search-focused content structure. In engineering, it can explain unfamiliar code or propose fixes. Cloudflare even points to use cases like customer service, chatbots, online search, and coding support, which lines up with how the market is actually using these systems today.

The more grounded pattern is this: language model plus context plus workflow equals value. Model alone, dropped into a business without structure, usually creates noise before it creates leverage.

Common Mistakes People Make

One mistake is treating the model like a database. It is not. Ask it for exact facts without grounding and you may get plausible nonsense. Another mistake is expecting one prompt to work forever. Good outputs often come from an iterative setup, not a single heroic instruction.

A third mistake is choosing based on hype instead of task fit. A top-ranked general model may still be the wrong pick for cost-sensitive support automation or for a narrow internal knowledge base. This is where benchmark literacy matters. HELM exists partly because single-angle evaluation is too shallow, and AWS’s framing of RAG matters because many real applications depend on current external knowledge rather than frozen training data.

And maybe the most expensive mistake of all: no human review loop. People assume that because the output sounds professional, it is production-ready. Sometimes it is. Often it needs editing, validation, or policy checks. That does not make the tool useless. It just means adults still need to stay in the room.

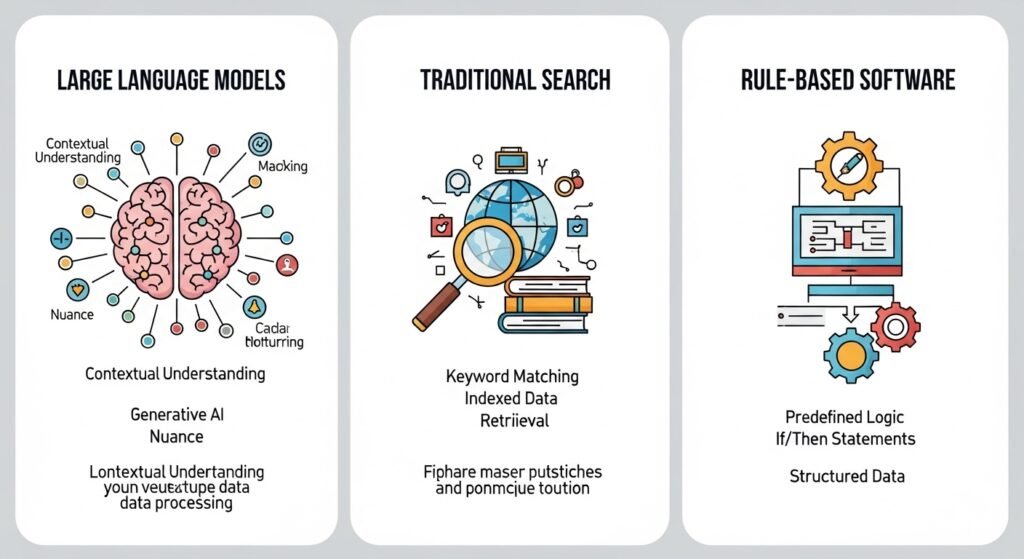

Large Language Model vs Traditional Search vs Rule-Based Software

A traditional search engine finds documents. A language model can synthesise an answer. Rule-based software follows explicit logic. A language model handles fuzzier inputs and ambiguous wording. That is the short comparison. The longer one is more interesting.

Traditional search is usually stronger when you need traceable sources and broad document retrieval. Rule-based systems are stronger when the process must be deterministic. Language models are stronger when the task is linguistic, variable, and messy. So the best systems often mix all three. Search retrieves. Rules constrain. The model interprets and writes. That hybrid pattern is where things are heading. Not because it sounds clever, but because each component covers a weakness in the others.
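
The "search retrieves, rules constrain, the model interprets and writes" pattern can be sketched in a few lines. This is a toy under stated assumptions: the search step is keyword matching, the single rule (blocking anything that looks like a 16-digit card number) is an invented example policy, and `fake_model` is a stand-in that makes no real model call.

```python
import re

def search(query, documents):
    """Retrieval step: keep documents that share at least one word with the query."""
    words = set(query.lower().split())
    return [d for d in documents if words & set(d.lower().split())]

def violates_rules(text):
    """Deterministic rule: flag anything that looks like a 16-digit card number."""
    return re.search(r"\b\d{16}\b", text) is not None

def fake_model(query, context):
    """Stand-in for a real language model call; it only stitches the context together."""
    return f"Based on {len(context)} source(s): " + " ".join(context)

def answer(query, documents):
    """Rules run first, search supplies the sources, the model writes last."""
    if violates_rules(query):
        return "Request blocked by policy."
    context = search(query, documents)
    if not context:
        return "No supporting documents found."
    return fake_model(query, context)

# An invented one-document knowledge base.
docs = ["Our refund policy lasts 30 days."]
reply = answer("refund policy", docs)
```

The ordering is the design choice worth noticing: the deterministic checks and the retrieval step both run before the model is asked to write anything, so the fuzzy component never has the first or only say.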

Where the Topic Is Going Next

The direction is pretty clear. Better retrieval. Better agent workflows. More realistic evaluations. More domain-specific deployment. Less obsession with raw size, more focus on system quality. The leaderboard culture will stay, because people love rankings, but selection will keep moving toward practical fit rather than pure bragging rights.

Benchmarking will also get more specialised. General chat preference scores are not enough for law, medicine, finance, support, or engineering. That is why projects like HELM and SWE-bench matter. They push evaluation closer to real use. Still imperfect. Still evolving. But better than one giant score that pretends every task is the same.

And for regular users, the biggest change may be boring in the best way. These models will just become part of software. Less of a spectacle. More of a layer. Like search, spellcheck, cloud sync. Quietly everywhere.

FAQs

What is a large language model in simple words?

It is an AI system trained on huge amounts of text so it can understand prompts and generate useful language-based output such as answers, summaries, rewrites, and code. Most modern generative AI tools rely on this kind of model underneath.

How do large language models actually work?

They learn patterns from massive datasets and predict the next token in a sequence using transformer architecture and self-attention. After that, many systems add fine-tuning, retrieval, and safety controls to make responses more useful in real tasks.

Are language models the same as search engines?

No. A search engine retrieves documents or pages, while a language model generates or synthesises text. In many modern products, both are combined so the system can retrieve sources first and then produce a grounded response.

Which benchmark should you trust when comparing models?

There is no single best benchmark for every case. Use broad frameworks like HELM for a wider evaluation view, and task-specific benchmarks like SWE-bench when you care about software engineering performance. The benchmark should match the job you need done.

Is a large language model good for business use right now?

Yes, especially for drafting, support, internal knowledge access, summarisation, and coding assistance. But it works best when paired with retrieval, governance, testing, and human review rather than used as a free-floating answer machine.

That is really the shape of it. Not magic. Not useless either. Just a powerful language layer that gets impressive fast, messy fast, and valuable when someone sets it up properly. Then it starts feeling less like a trend and more like infrastructure.