What is Intelligen AI? Understanding Artificial Intelligence Fundamentals

AI Overview: What Artificial Intelligence Really Means

When people discuss AI, they often describe it as machines that learn, software that adapts, or systems that predict. That description is not entirely wrong, but it misses a critical point about how intelligent AI agents for business actually function.

Artificial intelligence is not mainly about replacing human thinking. It is more about identifying patterns, processing huge amounts of information, and helping people make faster, more informed decisions. This is why understanding what artificial intelligence actually is matters before discussing intelligent automation tools or advanced applications.

Current AI systems do not “understand” in the same way humans do. They detect relationships, probabilities, and repeated signals across data. That difference matters because expectations shape outcomes. If people expect AI to think like a human, they will be disappointed. If they understand it as a system for pattern recognition and contextual support, its value becomes much clearer.

Key Insight: Data alone is not enough. Intelligen AI only becomes useful when it operates within a clear framework, serves a defined purpose, and respects meaningful boundaries.

AI in Movies vs. Reality: Why the Gap Matters

Public imagination around AI has been shaped heavily by films and futuristic storytelling. In those stories, AI often appears as emotional robots, sentient systems, or machines with hidden motives. Those narratives are usually about fear, control, and power more than real technology.

In daily life, AI is much less dramatic. It appears in recommendation engines, fraud alerts, content moderation, forecasting systems, and search tools. It works more like a silent assistant than a science fiction villain.

This everyday version of intelligent AI agents is closer to practical automation than the movie versions people often imagine.

The Real Risk: The bigger danger is not that machines become too human-like. It is that humans assume machines understand more than they actually do. That gap between story and reality creates confusion, overconfidence, and poor decisions.

Co-Intelligence: Working Effectively With Intelligent AI Systems

Humans + AI = Better Outcomes

A more useful way to think about Intelligen AI is through co-intelligence. That means humans and AI working together instead of competing with each other.

Humans still provide judgment, ethics, direction, and context. AI brings speed, scale, pattern detection, and help with managing complexity. You can already see this partnership in:

- Writing assistance and content optimization

- AI-powered search and discovery

- Quick summaries and data interpretation

- Workflow assistance and automation

- Better data-driven decision making

This kind of partnership changes where human effort goes. People spend less time on repetitive analysis and more time on interpretation, decisions, and strategy. That is one reason intelligent automation tools are becoming more practical for businesses of all sizes.

Co-Intelligence Works When: People stay both curious and careful. Blind trust is dangerous, but fear is also limiting. The goal is not dependence. The goal is responsible collaboration.

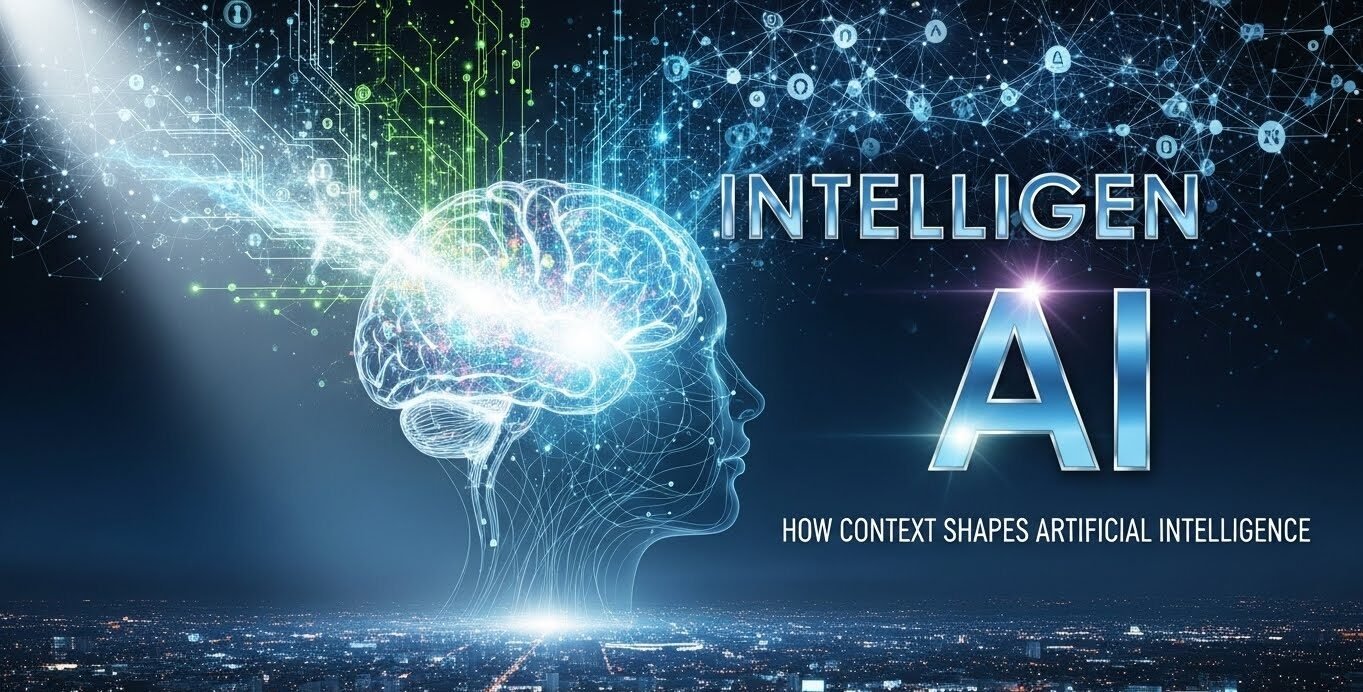

Intelligent Agents in AI: How They Actually Work in Practice

Breaking the Autonomy Myth

One common misunderstanding is that an intelligent agent in AI is fully independent and self-directed. In most cases, that is not true.

An intelligent agent usually works within clearly defined rules, goals, and limits. It observes conditions, responds to changes, and takes actions based on the environment it is designed for. It does not have secret intentions or personal ambitions. It is an optimization system operating inside boundaries.

Think of intelligent agents as specialized tools with guardrails, not autonomous entities with free will.
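To make "tools with guardrails" concrete, here is a minimal sketch of a bounded agent, assuming a hypothetical support-ticket router. The keyword table, team names, and `route_ticket` function are illustrative inventions standing in for a trained classifier. What matters is the structure: the agent observes an input, can only choose from a fixed set of outcomes, and hands anything it cannot place confidently back to a person.

```python
from collections import Counter

# Hypothetical keyword table standing in for a trained classifier.
TEAM_KEYWORDS = {
    "billing": {"invoice", "refund", "charge", "payment"},
    "technical": {"error", "crash", "login", "bug"},
    "shipping": {"delivery", "tracking", "package", "shipped"},
}


def route_ticket(text: str, min_hits: int = 1) -> str:
    """Observe a ticket and return a team name or "human_review".

    Every possible return value comes from a fixed, designer-defined set:
    the agent cannot invent a new destination.
    """
    words = {w.strip(".,!?").lower() for w in text.split()}
    scores = Counter({team: len(words & kws) for team, kws in TEAM_KEYWORDS.items()})
    (best_team, best), (_, runner_up) = scores.most_common(2)
    # Guardrail: escalate instead of guessing when evidence is weak or tied.
    if best < min_hits or best == runner_up:
        return "human_review"
    return best_team


print(route_ticket("I was charged twice, please refund my payment"))  # billing
print(route_ticket("Hello, I have a question"))  # human_review
```

The escalation rule is the design choice worth noticing: the agent's "autonomy" ends exactly where its designers decided the evidence is too thin.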

Common Business Uses of Intelligent Agents

In real organizations, AI agents for business typically:

Route Support Tickets

- Send customer queries to the correct team faster

- Improve customer care and service workflow efficiency

- Reduce manual sorting and human error

Adjust Pricing Dynamically

- Help businesses respond to demand changes

- React to competitive pressure in real time

- Optimize margins based on market conditions

Trigger Smart Inventory Decisions

- Recommend reordering based on sales velocity

- Analyze buying patterns and seasonal trends

- Prevent stockouts and overstock situations

Detect Unusual Activity

- Flag fraud, anomalies, and security risks

- Identify patterns humans might miss

- Alert teams before problems escalate
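The "Detect Unusual Activity" use case often starts with a simple statistical outlier check. The sketch below flags new transaction amounts that sit far from the historical mean, measured in standard deviations (a z-score). The 3.0 threshold and the `flag_anomalies` name are assumptions for illustration; real fraud systems typically combine many such signals with learned models.

```python
from statistics import mean, stdev


def flag_anomalies(history: list[float], new_amounts: list[float], threshold: float = 3.0) -> list[float]:
    """Return the new amounts whose z-score against `history` exceeds `threshold`."""
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation; needs at least two points
    if sigma == 0:
        # With no variation in history, anything different from the mean is unusual.
        return [x for x in new_amounts if x != mu]
    return [x for x in new_amounts if abs(x - mu) / sigma > threshold]


print(flag_anomalies([100, 102, 98, 101, 99], [100, 103, 150]))  # [150]
```

The flagged list is meant to go to a person for review, which matches the "alert teams" step rather than acting on its own.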

The Critical Factor: The effectiveness of these agents depends entirely on design quality. Poor boundaries lead to poor outcomes, often very quickly. Autonomy does not mean freedom. It means responsibility has shifted to the people who built, trained, and deployed the system.
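The point about boundaries can be illustrated with dynamic pricing. In this minimal sketch, a pricing model (not shown, treated as a black box) proposes a price, and hard limits decide what actually goes live: a floor, a ceiling, and a cap on how far the price may move in one step. The function name, parameters, and the 10% step limit are hypothetical.

```python
def apply_price_guardrails(
    current: float,
    proposed: float,
    floor: float,
    ceiling: float,
    max_step_pct: float = 0.10,
) -> float:
    """Clamp a model-proposed price to business-approved limits."""
    if floor > ceiling:
        raise ValueError("floor must not exceed ceiling")
    max_step = current * max_step_pct
    # Limit the size of any single change relative to the current price.
    stepped = min(max(proposed, current - max_step), current + max_step)
    # Never go outside the absolute floor and ceiling.
    return round(min(max(stepped, floor), ceiling), 2)


print(apply_price_guardrails(20.0, 30.0, floor=15.0, ceiling=25.0))  # 22.0
```

Keeping the limits outside the model means a bad prediction can only move prices within a range people have already approved.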

AI Retail Business Intelligence: Making Faster, Smarter Decisions

Why Retail Needs Intelligent AI Systems

Retail is one of the clearest examples of practical AI application. Market conditions change constantly:

- Products go out of stock unexpectedly

- Customer behavior shifts with seasons and trends

- Demand can rise or drop with little warning

- Competitors respond in real time

AI retail business intelligence helps businesses process these changes faster than manual analysis alone. It looks for signals in transactions, customer activity, inventory movement, and behavioral trends. The value is not only in prediction—it is also in helping organizations respond with less guesswork and less emotional decision-making.

Better Decisions Under Pressure

Teams can make smarter calls about:

- Reordering inventory at optimal times

- Designing promotions that actually convert

- Making inventory cuts based on real data

- Understanding true category performance
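For the reordering decision, a common starting point is the reorder-point rule: reorder when stock on hand falls to the expected demand during the supplier's lead time plus a safety buffer. The sketch below estimates demand from recent daily sales (sales velocity) and uses the textbook safety-stock approximation z × σ_daily × √lead_time. The 1.65 factor (roughly a 95% service level if daily demand is approximately normal) and the function names are assumptions; an AI forecasting model would typically replace the simple average with a richer forecast.

```python
from math import sqrt
from statistics import mean, stdev


def reorder_point(daily_sales: list[float], lead_time_days: float, z: float = 1.65) -> float:
    """Expected lead-time demand plus safety stock (needs at least two days of sales)."""
    velocity = mean(daily_sales)  # average units sold per day
    sigma = stdev(daily_sales)  # day-to-day variability in demand
    safety_stock = z * sigma * sqrt(lead_time_days)
    return velocity * lead_time_days + safety_stock


def should_reorder(on_hand: float, daily_sales: list[float], lead_time_days: float) -> bool:
    return on_hand <= reorder_point(daily_sales, lead_time_days)


print(should_reorder(45, [10, 10, 10, 10], lead_time_days=5))  # True
```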

What Actually Matters: Pretty dashboards are not the real advantage. The real advantage is reducing bad decisions made under pressure. When stakes are high and time is short, data-driven guidance backed by intelligent automation tools changes outcomes.

Understanding Customers Through AI Retail Intelligence

AI retail intelligence also helps businesses understand customers more clearly. It can analyze:

- Click behavior and browsing patterns

- Purchase timing and frequency

- Abandoned carts and hesitation signals

- Product comparison behavior

When used responsibly, this leads to better customer experiences:

- Customers see more relevant suggestions

- Product placement improves conversion

- Businesses waste fewer opportunities on poor targeting

- Personalization feels helpful, not invasive
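One simple way to produce more relevant suggestions is co-purchase counting: recommend items that often appear in the same orders as the item a customer is viewing. This sketch uses only the contents of past orders, not browsing history or personal profiles. The order data and function names are hypothetical, and production recommenders usually add normalization and learned models on top of raw counts.

```python
from collections import Counter
from itertools import combinations


def build_co_purchase_counts(orders: list[set[str]]) -> dict[str, Counter]:
    """Count how often each pair of items appears in the same order."""
    counts: dict[str, Counter] = {}
    for order in orders:
        for a, b in combinations(sorted(order), 2):
            counts.setdefault(a, Counter())[b] += 1
            counts.setdefault(b, Counter())[a] += 1
    return counts


def recommend(item: str, counts: dict[str, Counter], k: int = 2) -> list[str]:
    """Return up to k items most often bought together with `item`."""
    return [other for other, _ in counts.get(item, Counter()).most_common(k)]


orders = [
    {"tent", "stove", "lantern"},
    {"tent", "stove"},
    {"tent", "sleeping bag"},
    {"stove", "fuel"},
]
counts = build_co_purchase_counts(orders)
print(recommend("tent", counts, k=1))  # ['stove']
```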

The Ethical Line: There is a line between helpful personalization and invasive tracking. If that line is crossed, people feel watched instead of served. This is where human oversight matters most. Better technology does not automatically mean better judgment. Businesses still need people to decide what is fair, appropriate, and respectful.

AI Governance Framework: Why It Matters Now More Than Ever

Governance Is Not Optional—It’s Essential

As AI becomes more common, governance is no longer optional. It is a practical necessity for organizations of any size.

AI governance means putting clear rules, review systems, and accountability measures around how Intelligen AI is used. It is not about slowing innovation. It is about making sure systems behave responsibly in real-world settings.

Key Areas of AI Governance

An effective AI governance framework examines:

- Data sources: Where is information coming from? Is it reliable?

- Decision impact: Who is affected by this system’s outputs?

- Downstream effects: What happens when this decision cascades through other systems?

- Accountability: Who is responsible if something goes wrong?

- Fairness: Does this system treat all users equitably?

- Risk exposure: What could break? What could cause harm?
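One way to make these questions hard to skip is to record the answers alongside each deployed system and block launch while any remain blank. The sketch below is a hypothetical review record, not a standard schema; its fields simply mirror the six areas above.

```python
from dataclasses import dataclass, fields


@dataclass
class GovernanceReview:
    system_name: str
    data_sources: str = ""  # Where is information coming from? Is it reliable?
    decision_impact: str = ""  # Who is affected by this system's outputs?
    downstream_effects: str = ""  # What happens when decisions cascade?
    accountable_owner: str = ""  # Who is responsible if something goes wrong?
    fairness_checks: str = ""  # How is equitable treatment tested?
    risk_exposure: str = ""  # What could break or cause harm?

    def missing_items(self) -> list[str]:
        """Names of review questions that have not been answered yet."""
        return [
            f.name
            for f in fields(self)
            if f.name != "system_name" and not getattr(self, f.name).strip()
        ]

    def ready_for_deployment(self) -> bool:
        return not self.missing_items()


review = GovernanceReview("ticket-router", data_sources="CRM export", accountable_owner="Support operations lead")
print(review.missing_items())
```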

Why This Matters: Without governance, automation can easily scale bias, poor logic, and existing organizational problems instead of fixing them. That is why AI use should be tied to strong risk management practices.

The Paradox: The more capable AI becomes, the more important human judgment becomes.

Why One-Size-Fits-All Governance Fails

Generic governance rules are often too broad to work well. Different industries face very different risks, responsibilities, and use cases. Healthcare, retail, logistics, finance, and software businesses all face different challenges.

Effective governance must fit the business context instead of being treated as a general compliance document.

What Good Governance Looks Like in Practice

Built Into Workflows

- Governance is part of daily operations, not added after deployment

- Teams understand their role in the oversight process

- Review happens naturally, not as an afterthought

Matched to Real Business Risk

- Rules reflect the actual impact of decisions

- Not focused only on theoretical concerns

- Scaled to match actual exposure

Easy for Teams to Follow

- If oversight feels unnatural or overly restrictive, teams will try to bypass it

- Clear guidelines that fit existing processes

- Support and training included

Focused on Reliability

- The purpose is not to block AI adoption

- The purpose is to make it safer, more consistent, and more trustworthy

- Enables confidence in system outputs

How Intelligen AI Integrates Into Everyday Work Systems

The Quiet Adoption: Why AI Spreads Unnoticed

One reason AI adoption moves quickly is that it often arrives quietly. It is built into tools people already use. It may appear in:

- Email sorting and priority management

- Meeting scheduling and calendar optimization

- Fraud monitoring and transaction review

- Content moderation and spam filtering

- Customer support systems and chatbots

- Automated reporting and insights

- Search assistance and knowledge discovery

The Adoption Pattern: Most people do not feel like they are adopting a major new technology. They feel like things are becoming faster, smoother, or easier.

This is one reason trust often develops before transparency. People accept the convenience first. They start asking deeper questions only when something goes wrong.

Where Trust Starts to Break—And How to Prevent It

Trust usually does not break because AI makes a mistake. Humans make mistakes too.

Trust breaks when:

- People cannot understand the outcome

- They have no way to check the underlying logic

- No one can identify who is responsible

- “The system decided” becomes a way to hide real decision-makers

- Accountability disappears

Why Explainability Is Non-Negotiable

Explainability is not a bonus feature. It is necessary for long-term acceptance.

People are far more likely to trust a system when they can understand why it produced an outcome, even if the outcome is not perfect. This is why organizations using Intelligen AI should care about communication just as much as technical performance.

The goal: Make AI decisions transparent enough that people understand the reasoning, even if they sometimes disagree with the result.
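A simple way to make a decision explainable is to report which inputs pushed it in which direction. The sketch below assumes a hypothetical linear risk score with invented weights and returns the strongest contributing factors alongside the outcome. More complex models need dedicated attribution methods, but the principle of returning reasons with results is the same.

```python
# Hypothetical weights for a transaction-review score; not from any real system.
WEIGHTS = {
    "amount_vs_usual": 0.8,
    "new_device": 1.5,
    "foreign_location": 1.0,
    "account_age_years": -0.3,
}


def score_with_reasons(
    features: dict[str, float], threshold: float = 2.0, top_n: int = 2
) -> tuple[str, float, list[tuple[str, float]]]:
    """Return (decision, total score, top contributing factors)."""
    contributions = {name: w * features.get(name, 0.0) for name, w in WEIGHTS.items()}
    total = sum(contributions.values())
    decision = "review" if total >= threshold else "approve"
    # Rank factors by how strongly they moved the score, in either direction.
    top = sorted(contributions, key=lambda n: abs(contributions[n]), reverse=True)[:top_n]
    return decision, round(total, 2), [(n, round(contributions[n], 2)) for n in top]


print(score_with_reasons({"amount_vs_usual": 2.0, "new_device": 1.0, "account_age_years": 1.0}))
# ('review', 2.8, [('amount_vs_usual', 1.6), ('new_device', 1.5)])
```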

Understanding the Real Limits of AI Systems

Where Intelligent AI Still Struggles

AI can be powerful, but it still has clear limits. Intelligent automation tools still struggle with:

- Subtle judgment calls that require context

- Value-based decisions involving ethics or preference

- Rare edge cases and unusual situations

- Real-world complexity that doesn’t match training data

- Situations requiring genuine creativity or innovation

Why Biased Data Produces Biased Results

If the training data contains bias, the output can reflect that bias. If the assumptions are weak, the result can look confident while still being wrong. That is why strong AI systems are built with review, override, and continuous improvement in mind.
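Review and override can be built into a system's structure rather than left to habit. In the sketch below, predictions under a confidence threshold go to a human, a human label always replaces the model's, and every final decision is logged so disagreements can feed later audits and retraining. The 0.85 threshold, the `Prediction`/`Decision` types, and the in-memory log are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Prediction:
    case_id: str
    label: str
    confidence: float  # model's probability for `label`, between 0 and 1


@dataclass
class Decision:
    case_id: str
    label: str
    decided_by: str  # "model" or "human"


def triage(pred: Prediction, threshold: float = 0.85) -> str:
    """Send low-confidence predictions to a person instead of acting on them."""
    return "auto" if pred.confidence >= threshold else "human_review"


def finalize(pred: Prediction, audit_log: list[dict], human_label: Optional[str] = None) -> Decision:
    """Record the final decision; a human label always overrides the model."""
    if human_label is not None:
        decision = Decision(pred.case_id, human_label, decided_by="human")
    else:
        decision = Decision(pred.case_id, pred.label, decided_by="model")
    audit_log.append({
        "case_id": pred.case_id,
        "model_label": pred.label,
        "confidence": pred.confidence,
        "final_label": decision.label,
        "overridden": decision.label != pred.label,
    })
    return decision


log: list[dict] = []
uncertain = Prediction("c1", "approve", 0.62)
print(triage(uncertain))  # human_review
print(finalize(uncertain, log, human_label="deny").decided_by)  # human
```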

The Most Reliable Approach:

Organizations that succeed with AI do not treat it as flawless. They treat it as a tool that must be monitored, questioned, and refined over time. That mindset is much healthier than hype-driven adoption.

Regular audits, feedback loops, and human review keep systems honest and effective.

How Organizations Actually Adopt AI: The Real-World Pattern

It Usually Starts With a Problem, Not a Strategy

Most organizations do not begin with a grand AI strategy. They begin with a concrete problem:

- Too much data and not enough insight

- Repetitive decisions draining team resources

- Service quality that is inconsistent

- Forecasting that is too slow

- Manual processes that bottleneck growth

Intelligen AI enters the business as a practical fix, not as a futuristic statement.

How Adoption Expands Over Time

Over time, once the tool proves useful, adoption expands naturally:

- Phase 1: Solve a small, painful problem

- Phase 2: See results and expand the tool

- Phase 3: Ask larger questions about scale, governance, and accountability

- Phase 4: AI shifts from being a tool to being a capability

This progression is healthy and sustainable because it builds confidence gradually.

What People Actually Worry About (And What Matters)

The Real Concerns Are Personal, Not Theoretical

Most people do not spend their days worrying about robot uprisings. Their real concern is usually more personal and immediate:

- Will my skills stay relevant?

- Will experience and judgment still matter when systems produce answers faster?

- Will AI support my work or slowly reduce my role?

- Can I compete with automation?

The Answer Depends on Culture, Not Just Software

Organizations that use AI to reinforce their people tend to get better results than those that use it only to replace them.

Reinforcement Model:

- AI handles repetitive work

- Humans focus on judgment, strategy, and context

- Skills evolve rather than disappear

- Productivity increases across the team

Replacement Model:

- AI eliminates roles

- Humans compete with automation

- The organization loses institutional knowledge

- Fear grows faster than capability

That is why the future of Intelligen AI is not just technical. It is organizational and human.

Connecting Deeper: Related Concepts in AI

To fully understand Intelligen AI and its broader context, explore these related topics:

- What is Generative AI? — Understanding the difference between generative and other AI types

- Best AI Tools in 2026 — Practical applications and platforms for businesses

- What is Machine Learning in 2026? — The foundational technology behind intelligent systems

Each of these builds on the core concepts of how AI actually works in modern business contexts.

Where This Leaves Us: The Moment We’re In

We are not standing at one extreme or the other. We are somewhere between hype and habit, between fear and familiarity.

Intelligen AI systems are still evolving, and so is our understanding of them. That is normal and healthy.

What matters now is keeping the conversation open:

- Ask how systems work

- Challenge results when they seem wrong

- Improve boundaries to prevent problems

- Accept that human intelligence and artificial intelligence are not the same thing, even when they work side by side

That is how change usually becomes real. Quietly, gradually, and then all at once.

FAQs: Your Questions About Intelligen AI Answered

What does Intelligen AI actually mean?

It usually refers to practical, context-aware AI that supports decision-making rather than trying to fully imitate human intelligence. Intelligent AI agents solve real business problems through pattern recognition and optimization.

Is AI different from automation?

Yes. Automation follows fixed rules and workflows. AI works more flexibly by identifying patterns, using feedback, and adjusting outputs within its defined scope. This is why intelligent automation tools are more adaptable than traditional automation.
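A toy fraud check shows the difference. The fixed rule uses a threshold a person chose once; the learned version picks its threshold from labeled past cases (a one-feature "decision stump") and shifts whenever new feedback is added. Both functions and the example data are hypothetical, and a stump is about the simplest learned model there is.

```python
FIXED_LIMIT = 1000.0  # chosen by a person; never changes on its own


def fixed_rule(amount: float) -> bool:
    """Traditional automation: flag anything over a hard-coded limit."""
    return amount > FIXED_LIMIT


def learn_threshold(examples: list[tuple[float, bool]]) -> float:
    """Pick the cut-off that misclassifies the fewest labeled (amount, is_fraud) cases."""
    candidates = sorted({amount for amount, _ in examples})

    def errors(t: float) -> int:
        return sum((amount > t) != is_fraud for amount, is_fraud in examples)

    return min(candidates, key=errors)


history = [(50.0, False), (60.0, False), (120.0, False), (300.0, False), (450.0, True), (900.0, True)]
learned = learn_threshold(history)
print(learned)  # 300.0
print(fixed_rule(400.0), 400.0 > learned)  # False True
```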

Are intelligent agents fully autonomous?

Usually not. Most AI agents for business operate within human-designed limits and depend heavily on good goals, clean inputs, and clear constraints. Autonomy does not mean independence from oversight.

Why is an AI governance framework important now?

Because AI decisions increasingly affect real people, businesses, and systems. Governance helps ensure fairness, accountability, and trust. Without it, biases and problems can scale quickly.

Will AI replace human decision-making entirely?

That is unlikely. Even in the strongest real-world use cases, the most effective systems still rely on human judgment, ethics, and context. The future is co-intelligence, not replacement.

How should my organization approach AI adoption?

Start with a real problem, not a technology strategy. Prove value in a small area, then expand. Build governance into the process from the beginning. Focus on reinforcement, not replacement.